When Speed Becomes Contagion: How AI Turns Local Shocks into Systemic Risk

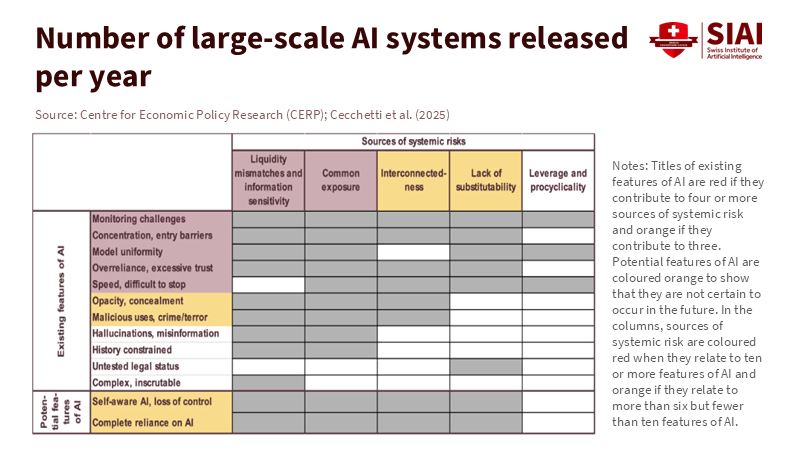

- AI turns rumors into instant, system-wide stress.
- Shared models and platforms cause herding and correlated errors.
- Use timed frictions, model diversity, and oversight of critical hubs.

The most important number in finance right now is $42 billion. That is how much one US bank saw withdrawn in a single day in March 2023, with another $100 billion lined up to leave the next morning. This was not an old-style bank run with depositors queuing outside branches. It happened through phones, online feeds, and electronic transfers, and it shows how fast things can now go wrong. That speed should anchor any discussion of the risks AI creates for the financial system. AI accelerates how information is produced, shared, and acted on: it can turn a rumor into a decision, and a decision into a cash flow, within hours. That acceleration is what turns ordinary local problems into system-wide panic. If we keep treating AI as a tool that individual firms use, rather than as a connected system, we will misjudge the risk.

AI Risk in Finance: What Now Moves Together

The traditional view assumed a lag between a damaging story and the reaction to it. AI removes that lag. Because firms draw on the same information, models, and platforms, AI speeds up pattern detection, automated decisions, and coordinated action across the whole system, which becomes tightly coupled as a result. Depositors and traders see the same content at the same time and react with similar tools. In March 2023, that bank lost $42 billion in eight hours, and $100 billion was waiting to leave the next day. Regulators later concluded that social media and online channels had made the run much worse. That speed is no longer unusual; it is what AI risk in finance looks like when things go wrong.

The problem is not only speed; it is that everyone is doing the same thing. Many firms now rely on similar AI services, cloud providers, data vendors, and model designs. Regulators warn that this can lead firms to act alike and make the same mistakes, especially when models are opaque or poorly governed. Add AI-driven news feeds and ad targeting, and a single social media post can prompt depositors to pull their money, or a flawed risk score can trigger a wave of simultaneous selling. Because modern finance is so densely interconnected, acting alike quickly becomes acting fast. That is why AI risk in finance has to be managed as a network problem, not model by model.

Five Things That Cause AI Risk in Finance

First, AI-driven disinformation can now move money, not just sentiment. The 2023 bank runs showed how social media and online banking accelerated withdrawals. Banks with high exposure to Twitter suffered larger losses when SVB failed, and activity on the platform revealed their stress in real time. Payment data show that at least 22 US banks experienced large deposit outflows that month. Recent consumer tests show that AI-generated false claims about a bank's safety, spread through cheap targeted ads, can make people want to move their money: almost 60% of those surveyed said they would consider doing so after seeing such content. Deepfakes also make it easier to defraud firms directly; in one widely reported case, a finance employee was tricked by a deepfake video call into sending about $25 million to scammers. The main point: with AI in the loop, bad news does not just scare markets; it moves money.

Second, when everyone uses the same models, everyone is more likely to make the same mistakes. The underlying idea is old: when participants are connected and receive the same information, shocks can cascade. What is new is that firms may now share the same kinds of models, data, and technology vendors. Regulators warn that this homogeneity increases fragility, especially when models are hard to interpret and trained on similar data. As AI systems shape news coverage and even internal memos, the same narratives appear everywhere, and credit, risk, and trading teams react in unison. Markets then swing in one direction, and when conditions change, liquidity can vanish quickly. This is not a theoretical concern. It is the most likely way AI risk in finance will show up: quietly at first, then abruptly under stress.
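
A minimal Monte Carlo sketch can make the herding argument concrete. It assumes a stylized setup of our own, not one from the sources: each of `n_banks` institutions makes a unit-variance model error every day, a hypothetical parameter `rho` sets how much of that error comes from a component shared by all of them (the same model or data), and a bank "errs" when its error exceeds a threshold. All names and numbers are illustrative.

```python
import math
import random


def mass_error_rate(rho: float, n_banks: int = 20, threshold: float = 1.0,
                    mass_share: float = 0.5, n_days: int = 10_000, seed: int = 0) -> float:
    """Fraction of simulated days on which at least `mass_share` of banks err together.

    Hypothetical stylized model: bank i's error is
        sqrt(rho) * common + sqrt(1 - rho) * own_i,
    so every error has variance 1 and any two banks' errors have correlation rho.
    """
    if not 0.0 <= rho <= 1.0:
        raise ValueError("rho must be in [0, 1]")
    rng = random.Random(seed)
    shared, private = math.sqrt(rho), math.sqrt(1.0 - rho)
    mass_days = 0
    for _ in range(n_days):
        common = rng.gauss(0.0, 1.0)  # shock from the shared model or data
        wrong = sum(
            1 for _ in range(n_banks)
            if abs(shared * common + private * rng.gauss(0.0, 1.0)) > threshold
        )
        if wrong >= mass_share * n_banks:
            mass_days += 1
    return mass_days / n_days


independent = mass_error_rate(rho=0.0)
correlated = mass_error_rate(rho=0.9)
print(f"independent models: {independent:.3f}, shared models: {correlated:.3f}")
```

In both runs each bank's individual error rate is the same, because every error has unit variance. What changes is timing: shared components make the bad days arrive together, so `correlated` comes out several times larger than `independent`.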

Third, concentration among a few providers creates single points of failure. AI runs on a small number of very large cloud and model platforms, often owned by the same companies. Authorities have begun to oversee these providers directly. The UK's regime for critical third parties took effect this year, and the EU now directly oversees critical technology providers under DORA, having designated major cloud and data firms as critical to the financial sector. The reason is that a problem at a major vendor can spread to many institutions at once. If everyone uses the same AI tools, a single outage or cyberattack can cause market-wide disruption even if each bank does everything right.
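
One common way to put a number on concentration is the Herfindahl-Hirschman Index (HHI), the sum of squared market shares. It is a standard tool from competition analysis, swapped in here for illustration rather than taken from the sources. The sketch computes it, along with the largest single-vendor "blast radius", over a hypothetical mapping of institutions to providers.

```python
from collections import Counter


def vendor_hhi(assignments: dict[str, str]) -> float:
    """Herfindahl-Hirschman Index (0 to 10,000) of vendor shares.

    `assignments` maps each institution to its primary provider (hypothetical data).
    Shares are counted per institution; weighting by assets or volumes would be
    more realistic but needs data we do not assume here.
    """
    if not assignments:
        raise ValueError("need at least one institution")
    counts = Counter(assignments.values())
    total = len(assignments)
    return sum((100.0 * n / total) ** 2 for n in counts.values())


def largest_blast_radius(assignments: dict[str, str]) -> tuple[str, float]:
    """Vendor whose outage would hit the largest share of institutions, and that share."""
    vendor, n = Counter(assignments.values()).most_common(1)[0]
    return vendor, n / len(assignments)


# Hypothetical example: three of four banks run on the same cloud.
banks = {"bank_a": "cloud_x", "bank_b": "cloud_x", "bank_c": "cloud_x", "bank_d": "cloud_y"}
print(vendor_hhi(banks))            # shares of 75% and 25%: 5625 + 625 = 6250.0
print(largest_blast_radius(banks))  # ('cloud_x', 0.75)
```

An HHI of 10,000 means a single provider serves everyone; the closer the index gets to that ceiling, the more one vendor outage looks like a sector-wide outage.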

Fourth, weak data and models can produce widespread errors. AI systems are only as good as their data and their security. National standards bodies warn about adversarial techniques that can covertly alter outputs or extract sensitive information, and explainability remains limited for many advanced models. The result is errors, particularly when conditions shift. Earlier this year, the UK's National Cyber Security Centre warned that prompt injection may never be fully fixed in current AI models, which means the risk has to be managed rather than eliminated. If several institutions use similar models and data and face the same attacks, they will make the same mistakes. AI risk then scales from a local issue to a systemic one: thousands of small, fast errors, all pointing in the same direction.
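
A standard result from statistics shows why shared models and data stop errors from cancelling out. Assume, for illustration, that $n$ institutions each make an error $e_i$ with variance $\sigma^2$ and a common pairwise correlation $\rho$ (these symbols are ours, not the sources'). The variance of the average error is

$$
\operatorname{Var}\!\left(\frac{1}{n}\sum_{i=1}^{n} e_i\right)
= \frac{1}{n^2}\Big(n\sigma^2 + n(n-1)\rho\,\sigma^2\Big)
= \sigma^2\left(\rho + \frac{1-\rho}{n}\right)
\;\xrightarrow{\;n\to\infty\;}\; \rho\,\sigma^2 .
$$

With independent errors ($\rho = 0$), the aggregate error shrinks as more institutions participate. With correlated errors, it never falls below $\rho\,\sigma^2$, however many institutions there are.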

Fifth, speed and liquidity are colliding. AI shrinks the time between a signal and a settled transaction, which helps in calm markets but is dangerous under stress. March 2023 is now the reference case: one bank lost about a quarter of its deposits in a single day, while deposit balances and market values moved throughout the day in response to online chatter. European authorities have drawn the same lesson: with instant payments and mobile banking, bank runs unfold in hours, not days. In markets, AI tools can trigger simultaneous selling and push prices down to fire-sale levels. The logic is simple: when everyone acts quickly and in the same direction, liquidity runs out. That is the core of AI risk in finance.
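
The collision between speed and liquidity comes down to simple arithmetic: the time a bank has is roughly its liquid buffer divided by the hourly outflow. The sketch below uses invented round numbers (deposits of 100, a liquid buffer of 20, and a run on a quarter of deposits); they are not any real bank's figures, and the constant-rate outflow is a simplifying assumption.

```python
import math


def hours_until_buffer_exhausted(liquid_buffer: float, deposits: float,
                                 run_share: float, run_hours: float) -> float:
    """Hours until a run exhausts the bank's liquid buffer.

    Simplifying assumptions (ours): `run_share` of deposits leaves at a constant
    rate over `run_hours`, and the bank raises no new liquidity in the meantime.
    Returns math.inf if the whole run fits inside the buffer.
    """
    total_outflow = run_share * deposits
    if total_outflow <= liquid_buffer:
        return math.inf
    hourly_outflow = total_outflow / run_hours
    return liquid_buffer / hourly_outflow


# Hypothetical bank: deposits of 100, liquid buffer of 20, a run on 25% of deposits.
branch_era = hours_until_buffer_exhausted(20, 100, 0.25, run_hours=40)  # five 8-hour business days
digital = hours_until_buffer_exhausted(20, 100, 0.25, run_hours=8)      # one 8-hour day
print(branch_era, digital)  # 32.0 6.4
```

Same bank, same run: spreading the outflow over a week of business days leaves four days to respond, while compressing it into one digital day leaves about six hours.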

What to Do About AI Risk in Finance

The first priority is a rule for handling transactions while rumors are spreading. Faster is not always safer, and the goal is to control speed, not to eliminate it. One policy memo suggested common-sense measures: slow large withdrawals while regulators prepare an announcement, pause automated selling programs when information circuit-breaker alerts fire, and require banks, platforms, and authorities to trace rumors in real time. The same principle should apply to retail and business customers alike. If AI risk grows when everyone uses the same fast tools at once, the remedy is a brief, timed pause that gives authorities time to establish the facts and keep the system stable.
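
The timed friction described above can be sketched as a simple rule: while an alert window is open, withdrawals above a size threshold are queued rather than executed, and the queue is released once the window closes. This is a minimal illustration under our own assumptions; the class name, the threshold, and the window length are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class WithdrawalThrottle:
    """Hypothetical timed friction: queue (not refuse) large withdrawals during an alert.

    Times are plain numbers (e.g. minutes since market open); all names are illustrative.
    """
    large_threshold: float
    alert_until: float = 0.0
    queue: list[tuple[str, float]] = field(default_factory=list)

    def raise_alert(self, now: float, duration: float) -> None:
        # Open or extend the alert window, e.g. while a verified statement is prepared.
        self.alert_until = max(self.alert_until, now + duration)

    def submit(self, now: float, account: str, amount: float) -> str:
        # Only large requests inside the window are delayed; everything else runs normally.
        if now < self.alert_until and amount > self.large_threshold:
            self.queue.append((account, amount))
            return "queued"
        return "executed"

    def release(self, now: float) -> list[tuple[str, float]]:
        # Once the window closes, hand queued requests back for normal processing.
        if now < self.alert_until:
            return []
        released, self.queue = self.queue, []
        return released


throttle = WithdrawalThrottle(large_threshold=1_000_000)
throttle.raise_alert(now=0, duration=60)
print(throttle.submit(10, "corp_a", 5_000_000))  # queued
print(throttle.submit(10, "retail_b", 2_000))    # executed
print(throttle.release(30))                      # [] (window still open)
print(throttle.release(61))                      # [('corp_a', 5000000)]
```

Queuing rather than refusing is what keeps the friction temporary: no one loses access to funds, they only wait until the window closes. A real scheme would also need exemptions (for payroll or settlement flows, say) and a hard limit on how long requests can be held, which this sketch omits.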

The second priority is to strengthen the infrastructure that makes AI ubiquitous. Supervise critical third-party providers and ensure they are resilient, secure, and able to recover from failures. Run sector-wide exercises that assume compromised models or cloud outages. Map shared dependencies, not just which institution uses which vendor. Require institutions to run multiple models for critical functions, with distinct data and decision processes, to reduce the risk of herding. Update stress tests to include AI-driven disinformation scenarios with short time horizons and rapid deposit losses, drawing on the events of 2023. Regulators should also issue official statements with digital signatures so that platforms and media can verify them and stop fakes in real time.
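
Mapping shared dependencies, rather than only direct vendor relationships, amounts to a graph traversal: follow each institution's dependencies through to its suppliers' suppliers, then record which institutions each upstream node ultimately supports. The sketch below does this with breadth-first search over a small, entirely hypothetical dependency graph.

```python
from collections import deque


def transitive_dependencies(graph: dict[str, list[str]], start: str) -> set[str]:
    """Everything `start` depends on, directly or indirectly (breadth-first search)."""
    seen = {start}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        for dep in graph.get(node, []):
            if dep not in seen:
                seen.add(dep)
                queue.append(dep)
    seen.discard(start)  # a node is not its own dependency, even in a cycle
    return seen


def exposure_by_node(graph: dict[str, list[str]], institutions: list[str]) -> dict[str, set[str]]:
    """For each upstream node, the institutions that would be hit if it failed."""
    exposure: dict[str, set[str]] = {}
    for inst in institutions:
        for node in transitive_dependencies(graph, inst):
            exposure.setdefault(node, set()).add(inst)
    return exposure


# Hypothetical graph: three banks, three different direct suppliers, one shared cloud.
graph = {
    "bank_a": ["model_vendor_1"],
    "bank_b": ["model_vendor_2"],
    "bank_c": ["cloud_x"],
    "model_vendor_1": ["cloud_x"],
    "model_vendor_2": ["cloud_x"],
}
exposure = exposure_by_node(graph, ["bank_a", "bank_b", "bank_c"])
for node, hit in sorted(exposure.items(), key=lambda kv: (-len(kv[1]), kv[0])):
    print(node, sorted(hit))
```

A list of direct vendors would show three different suppliers here; the traversal shows that all three banks ultimately rest on `cloud_x`.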

What Teachers and Leaders Should Do Next

Courses need to keep up with how AI changes risk in finance. Teach the new math of bank runs: show how uninsured deposits, social media, and instant payments combine to drain money from banks quickly. Assign readings that span finance, cybersecurity, and communications. Students should study the 2023 events to see how fast stress spreads and through which channels. They should simulate rumor-driven panics, then test how slowing transactions and sending clear, verified messages from regulators change the outcome. The goal is not to scare students; it is to prepare them.
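
For the classroom exercise, a deliberately simple deterministic model is enough to show the mechanics. The sketch below is a toy model of our own, not one taken from the sources: each hour the share of depositors who have seen the rumor grows, a fixed share of newly alarmed depositors asks to withdraw, an optional friction caps how much can leave per hour, and an optional verified regulator message slows the rumor from a chosen hour onward. Every parameter name and value is hypothetical.

```python
from typing import Optional


def simulate_run(hours: int = 12, seed_aware: float = 0.01, spread: float = 0.9,
                 response: float = 0.6, hourly_cap: Optional[float] = None,
                 message_hour: Optional[int] = None, damping: float = 0.2) -> float:
    """Share of initial deposits withdrawn within `hours` in a toy rumor-run model.

    Hypothetical assumptions: rumor awareness grows logistically at rate `spread`;
    a share `response` of newly aware depositors asks to withdraw; `hourly_cap`
    (a share of initial deposits) limits outflows per hour and queues the rest;
    from `message_hour` on, a verified regulator message scales both the rumor's
    spread and the queue of pending requests by `damping` each hour.
    """
    aware = seed_aware                  # share of depositors who have seen the rumor
    pending = response * seed_aware     # withdrawal requests not yet executed
    withdrawn = 0.0
    for hour in range(hours):
        rate = spread
        if message_hour is not None and hour >= message_hour:
            rate *= damping             # the message slows the rumor...
            pending *= damping          # ...and some queued requests are cancelled
        # Logistic growth; min() keeps awareness from exceeding 100%.
        new_aware = min(rate * aware * (1 - aware), 1 - aware)
        aware += new_aware
        pending += response * new_aware
        # Friction: at most `hourly_cap` of initial deposits leaves this hour.
        outflow = pending if hourly_cap is None else min(pending, hourly_cap)
        withdrawn += outflow
        pending -= outflow
    return withdrawn


for label, kwargs in [
    ("no intervention", {}),
    ("friction only", {"hourly_cap": 0.03}),
    ("message only", {"message_hour": 4}),
    ("friction + message", {"hourly_cap": 0.03, "message_hour": 4}),
]:
    print(f"{label:>20}: {simulate_run(**kwargs):.1%} of deposits withdrawn in 12 hours")
```

Students can vary the cap and the message timing. In this toy model the friction alone only delays outflows (queued requests are still waiting at the end of the horizon), while an early verified message slows the rumor itself; combining the two means some requests queued by the friction are cancelled once the message lands instead of being executed.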

Within institutions, leaders should develop joint playbooks across treasury, risk, communications, legal, and security teams. These teams need to spot fake media quickly, push verified statements across channels, and work with platforms to remove harmful fake content. They should also audit their own AI systems for shared weaknesses, such as identical models, data vendors, or configurations. Test AI agents against attacks, and maintain break-glass manual fallbacks for essential flows. None of this slows innovation. It sustains innovation by ensuring that one clever hack or one lie cannot bring the whole system down.
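
The internal audit for shared weaknesses can start as a plain inventory exercise: list each AI system with its underlying model, data vendor, and hosting setup, then flag any component that several systems share. The sketch below assumes a hypothetical inventory format; the system, model, and vendor names are invented.

```python
from collections import defaultdict


def shared_components(inventory: dict[str, dict[str, str]],
                      min_systems: int = 2) -> dict[tuple[str, str], list[str]]:
    """Components (kind, name) used by at least `min_systems` AI systems.

    `inventory` maps a system name to its components, e.g.
    {"model": ..., "data": ..., "hosting": ...}; the format is hypothetical.
    """
    users = defaultdict(list)
    for system, components in inventory.items():
        for kind, name in components.items():
            users[(kind, name)].append(system)
    return {key: sorted(systems) for key, systems in users.items()
            if len(systems) >= min_systems}


# Hypothetical inventory of three internal AI systems.
inventory = {
    "credit_scoring": {"model": "llm_vendor_a", "data": "data_vendor_1", "hosting": "cloud_x"},
    "fraud_detection": {"model": "in_house_gbm", "data": "data_vendor_1", "hosting": "cloud_x"},
    "treasury_alerts": {"model": "llm_vendor_a", "data": "data_vendor_2", "hosting": "cloud_y"},
}
for (kind, name), systems in shared_components(inventory).items():
    print(f"{kind}={name} is shared by {', '.join(systems)}")
```

Each flagged component is a candidate for the "same mistake everywhere" failure mode described above; a natural next step would be checking whether the flagged systems feed the same critical decisions.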

The $42 billion day is not just a number to remember; it is a constraint. Information now moves at model speed, and money follows close behind. That is not going to change. What can change is how we handle the stress. AI risk in finance is a network problem, rooted in shared tools, data, vendors, and narratives. The solutions need to be network-aware too: slowing money flows while rumors spread, supervising key infrastructure, diversifying models for important decisions, and countering fake news with fast, verified messages. Educators should train students for these scenarios, policymakers should write the rules and test them, and bank leaders should rehearse them. We do not have to accept that every rumor turns into a bank run. If we build safeguards that work at the speed of AI, the next $42 billion day can become a thing of the past.

The views expressed in this article are those of the author(s) and do not necessarily reflect the official position of the Swiss Institute of Artificial Intelligence (SIAI) or its affiliates.

References

Banque de France. (2024). Digitalisation—A potential factor in accelerating bank runs? Bloc-notes Éco.

Bank of England; Prudential Regulation Authority; Financial Conduct Authority. (2024). Operational resilience: Critical third parties to the UK financial sector (PS16/24).

Bank for International Settlements. (2024). Annual Economic Report 2024, Chapter III: Artificial intelligence and the economy: Implications for central banks.

Bank for International Settlements—Financial Stability Institute. (2023). Managing cloud risk (FSI Insights No. 53).

California Department of Financial Protection and Innovation. (2023). Order taking possession of property and business of Silicon Valley Bank.

Cipriani, M., La Spada, G., Kovner, A., & Plesset, A. (2024). Tracing bank runs in real time (Federal Reserve Bank of New York Staff Report No. 1104).

Cookson, J. A., Fox, C., Gil-Bazo, J., Imbet, J.-F., & Schiller, C. (2023). Social media as a bank run catalyst (working paper).

European Systemic Risk Board—Advisory Scientific Committee. (2025). AI and systemic risk.

Federal Reserve Board Office of Inspector General. (2023). Material loss review: Silicon Valley Bank.

Fenimore Harper & Say No to Disinfo. (2025). AI-generated content and bank-run risk: Evidence from UK consumer tests.

Financial Stability Board. (2024). The financial stability implications of artificial intelligence.

National Institute of Standards and Technology. (2023). AI Risk Management Framework (AI RMF 1.0).

National Institute of Standards and Technology. (2024). Generative AI: Risk considerations (NIST AI 600-1).

National Cyber Security Centre (UK). (2025). Prompt injection is not SQL injection (it may be worse).

Reuters. (2024). Yellen warns of significant risks from AI in finance.

Reuters. (2025). EU designates major tech providers as critical third parties under DORA.

Swiss Institute of Artificial Intelligence. (2025). Digital bank runs and loss-absorbing capacity: Why mid-sized banks need bigger buffers.

VoxEU/CEPR Press. (2025). AI and systemic risk.

World Economic Forum. (2025). Cybercrime lessons from a $25 million deepfake attack.
