When Cheap Money Stops Working: Why the Zero Lower Bound no longer revives Europe’s economy

High public debt is weakening the power of the zero lower bound in Europe When fiscal credibility erodes, lower interest rates no longer guarantee stronger growth or stable inflation Europe must rebuild fiscal space and institutional trust if monetary policy is to work again

Copyright’s Quiet Pivot: Why AI data governance — not fair use — will decide the next phase of legal rules

Copyright’s Quiet Pivot: Why AI data governance — not fair use — will decide the next phase of legal rules

Published

Modified

AI copyright disputes are shifting toward strict AI data governance and data provenance scrutiny Settlements and licensing deals now shape the legal landscape more than courtroom doctrine The future of AI regulation will depend on verifiable governance, not abstract fair-use theory

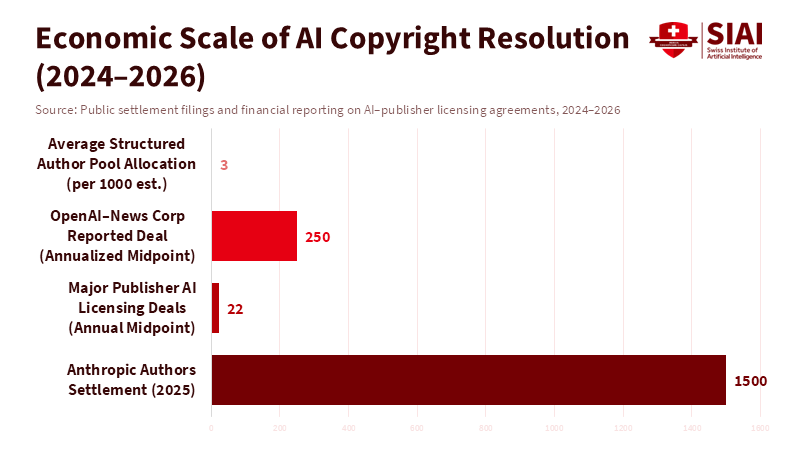

When authors and publishers went to court with AI developers, everyone thought we'd get clear answers about if it's okay to train AI using copyrighted stuff. But that's not how it's turning out. By late 2025, a big case didn't end with some fancy legal rule, but with a deal: a judge gave the thumbs up to a roughly $1.5 billion agreement between authors and an AI company. The issue was that the AI allegedly used about 465,000 books to train its models. That huge number tells us something important: not knowing the rules is costing people a lot. So, companies, publishers, and even the courts are starting to focus on something simple: can you prove where the data came from, how you got it, and that it's legit? They're not trying to make some grand statement about fair use. This isn't some legal trick. It's a big change in how we handle AI data, which is becoming the key to being responsible.

AI Data Management and the Changing Legal World

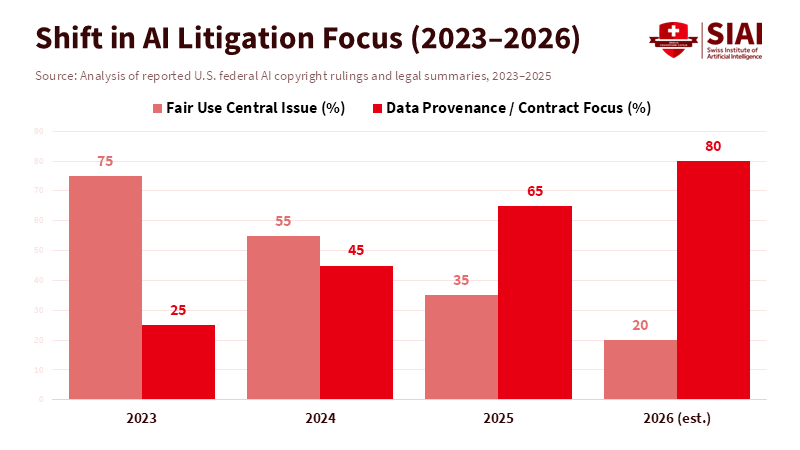

Previously, people thought copyright and AI were a simple yes-or-no question: is using copyrighted material to train AI fair, or is it against the law? That question sparked significant debate among experts and a few court opinions, but it didn't really help businesses figure things out. But in 2024 and 2025, courts began examining the same facts in a new way. Judges wanted real proof about where the data came from, what agreements covered it, whether anyone was using pirated materials, and whether you could trace what the AI produced back to specific copyrighted works. These aren't just abstract legal ideas; they're practical things companies can check. And that's important because companies can take action on this. They can maintain records, conduct audits, obtain licenses, and demonstrate the source of their data. They can't go back in time and change the Constitution. Companies with strong AI data management will face less legal risk and have a better reputation than those that don't.

According to AP News, some rights holders are now seeking to monetize through licensing agreements and business deals rather than relying solely on uncertain and lengthy court cases. And the market is reacting. By mid-2025 and into 2026, major publishers and news services were entering into business agreements with AI developers. These big deals showed everyone that there's money to be made. For AI developers who have billions on the line, paying to get data legally or at least showing they have a good reason to believe their data is legit is better than risking a court decision that cuts them off from important data. The legal fight is shifting from "Is this legal?" to "Can you prove where you got this?" And that's where AI data management comes in.

AI Data Management: Agreements, Licenses, and What the Market is Saying

The numbers are pretty amazing and tell us a lot. A settlement of approximately $1.5 billion in 2025, which was agreed upon rather than won in court, isn't just about getting paid back. It's a sign of what things are worth. It tells the people in charge of risk, the people on the board, and the people buying AI what it might cost if they don't follow the rules, and how valuable it is to have a data supply chain that you can trust. At the same time, large licensing deals between publishers and AI companies began to emerge, and the reported prices indicate that the market is rising. Major publishers are reportedly receiving millions of dollars each year for allowing AI companies to use their content, and one deal was reportedly worth $20–$25 million annually. These numbers aren't always clear, and many agreements aren't public, but the trend is evident: rights holders are getting money through contracts while the legal disputes are more about the specific facts of each case.

The courts are also helping this trend. Throughout 2025, several court decisions emphasized the details of how data was changed, accessed, and where it came from, rather than making broad general rules. In other words, the courts often didn't make a big statement about fair use, but they did make it clear that they wanted to see proof of how the data was obtained and used. This creates a predictable legal situation: if judges require proof of data origin, those who can provide it have greater leverage in negotiations, and those who can't are more likely to settle. For the people making the rules and for institutions, this means that you can prevent problems and measure how well you're doing. If a company keeps track of who gave them data, what agreements covered it, what changes were made, and how they verify that copyrighted material isn't being copied, they'll be in a better position to avoid expensive settlements or being told to stop.

AI Data Management for Schools and Organizations

If courts and the market agree that it's important to know where data comes from and to have clear agreements, then schools and universities need to make AI data management a top priority. Universities and educational companies are in a unique position: they create valuable training materials (such as lesson plans, research, and lecture recordings) and rely heavily on data from others. A good management plan should have at least three things. First, know what you have and where it came from: every dataset used in a model should be logged with basic information such as its source, licensing terms, capture date, and whether permission was granted. Second, have clear agreements: licensing and data-sharing agreements should state that model training is permitted and specify who gets credit, how funds will be shared, and how the data can be used. Third, be able to audit and control changes: schools should use reliable systems that record data changes and allow external auditors to verify that copyrighted content wasn't copied without permission.

These are real steps, not just good ideas. For example, a textbook company considering partnering with an AI company can determine whether offering a licensed feed for model training will generate more revenue than it costs to manage the rights. Similarly, a university developing its own AI tools can use models trained on data it can verify as clean, reducing its insurance costs, legal fees, and reputational risk. The government can help by supporting shared resources such as licensing marketplaces, standardized methods for recording data origins, and integrated audit tools. These shared resources lower costs and reduce the temptation to take advantage of the system while still protecting creators' rights. To put it simply, good AI data management is also good for the economy.

AI Data Management: Addressing Concerns and Establishing Norms

Some worry that settlements and private licensing will only benefit big companies and create a system in which only those who can pay get access, thereby limiting the flow of information. That's a valid concern. If only big publishers can profit from their archives, smaller creators might be left out, which could harm the public interest. But the alternative – a free-for-all where everyone grabs data without permission, leading to lawsuits – has its own problems: it erodes trust, causes unpredictable takedowns, and leads to models trained on unreliable data. The solution isn't one or the other. It needs to combine market contracts with public safeguards. That means being watchful for antitrust issues to prevent licensing that excludes others, supporting exemptions for research under clear rules, and requiring transparency so that outsiders can check whether models use licensed or unlicensed content.

Some people argue that management rules will be circumvented or become so costly that they slow innovation. The answer is to adjust based on the evidence. Simple, cheap ways to show where data came from – like a register of datasets that machines can read and a tiered licensing system – can get most of the benefits of compliance without a lot of red tape. When private markets don't provide broad access for research and education, public money can create curated, licensed datasets for non-commercial use. And the courts will still be important. Narrow rulings that focus on the facts of each case and emphasize where data came from aren't a replacement for laws, but they do influence how the market behaves. The combination of court oversight, negotiated contracts, and policy support makes it harder to claim ignorance as a defense and more appealing to build compliance into product design from the outset.

The $1.5 billion settlement is a crude measure that signals a more delicate situation: legal disputes over AI are shifting from abstract arguments to real-world actions. For creators, companies, and schools, the main question has become whether models can prove, with records and contracts, where their data came from and how it was handled. That's what AI data management provides. Policymakers should stop asking only whether training is fair in theory and start setting minimum standards for data provenance, funding shared licensing resources, and protecting non-commercial research channels. Schools should manage their archives, document them, and, when appropriate, monetize them under standard terms. Industry should use data formats that work together and independent audit systems. If we do this, the market will reward clarity. If we don't, we'll end up with costly settlements, broken norms, and a legal environment that discourages innovation rather than guides it. The challenge ahead isn't just legal or technical. It's a management problem that we can solve – if we create the systems to prove it.

The views expressed in this article are those of the author(s) and do not necessarily reflect the official position of the Swiss Institute of Artificial Intelligence (SIAI) or its affiliates.

References

Associated Press (2025) ‘Judge approves $1.5 billion copyright settlement between AI company Anthropic and authors’, Associated Press, 2025.

Debevoise & Plimpton LLP (2025) AI intellectual property disputes: The year in review. New York: Debevoise & Plimpton.

Digiday (2026) ‘A 2025 timeline of AI deals between publishers and tech’, Digiday, 2026.

Engadget (2025) ‘The New York Times and Amazon’s AI licensing deal is reportedly worth up to $25 million per year’, Engadget, 2025.

European Parliament (2025) Generative AI and copyright: Training, creation, regulation. Directorate-General for Citizens’ Rights, Justice and Institutional Affairs. Brussels: European Parliament.

IPWatchdog (2025) ‘Copyright and AI collide: Three key decisions on AI training and copyrighted content’, IPWatchdog, 2025.

JDSupra (2025) ‘Fair use or infringement? Recent court rulings on AI training’, JDSupra, 2025.

Meta Platforms, Inc. & Anthropic PBC litigation reporting (2025) ‘Meta and Anthropic win legal battles over AI training; the copyright war is far from over’, Yahoo Finance, 2025.

Pinsent Masons (2025) ‘Getty Images v Stability AI: Why the remaining copyright issues matter’, Pinsent Masons Out-Law, 2025.

Reuters (2024) ‘NY court rejects authors’ bid to block OpenAI cases from NYT, others’, Reuters, 2024.

Reuters (2024) ‘OpenAI strikes content deal with News Corp’, Reuters, 2024.

Skadden, Arps, Slate, Meagher & Flom LLP (2025) Fair use and AI training: Two recent decisions highlight fact-specific analysis. New York: Skadden.

TechPolicy Press (2025) ‘How the emerging market for AI training data is eroding big tech’s fair-use defense’, TechPolicy Press, 2025.

Similar Post

When Cheap Becomes Contagious: China’s Deflation Spillover and the New Financial Faultline

China’s domestic deflation is no longer contained; it now reshapes global prices, profits, and financial risk Industrial subsidies extend price pressure, turning a trade shock into a systemic financial spillover Global policy must adapt quickly to manage a deflationary force emanating from the world’s manufacturing center

Rewiring Appetite: How Multi-Pathway Obesity Drugs Redefine Clinical and Educational Priorities

Appetite is not a single switch but a network that can now be powerfully overridden Multi-pathway obesity drugs deliver record weight loss while introducing new biological risks Policy and education must adapt as fast as the science does

The Super Bowl Is PR — The Enterprise Buys the Orchestration Layer

The Super Bowl Is PR — The Enterprise Buys the Orchestration Layer

Published

Modified

Enterprise AI competition is decided inside procurement systems, not public ad campaigns The real battle is over who controls enterprise AI orchestration and workflow integration Governance, interoperability, and institutional trust now matter more than model branding

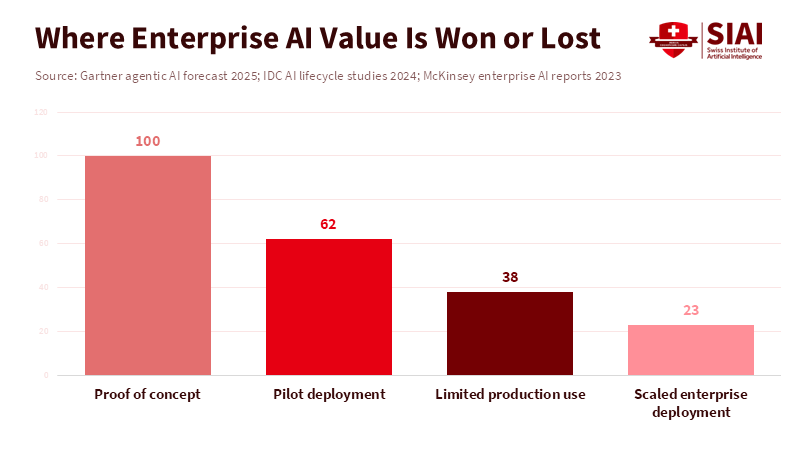

Enterprise artificial intelligence (AI) management is like a concealed competition. It determines if a business adds a pre-made chatbot or completely restructures its operations around a new kind of tech foundation. A trusted forecast suggests businesses will spend heavily on AI, with hundreds of billions in 2025. But there are warnings that many AI projects, almost half, might fail before they ever generate lasting value. These two facts, the huge potential spending and the risk of failure, change things. Big public displays, like Super Bowl ads, can improve brand image and pique customer interest. But they don't materially affect the checklists, integration plans, or compliance rules important to purchasing departments. The true competition isn't about who has the most amusing ad, but about who becomes the go-to management system for business processes. That's where the real control, consistent income, and long-term market dominance are established.

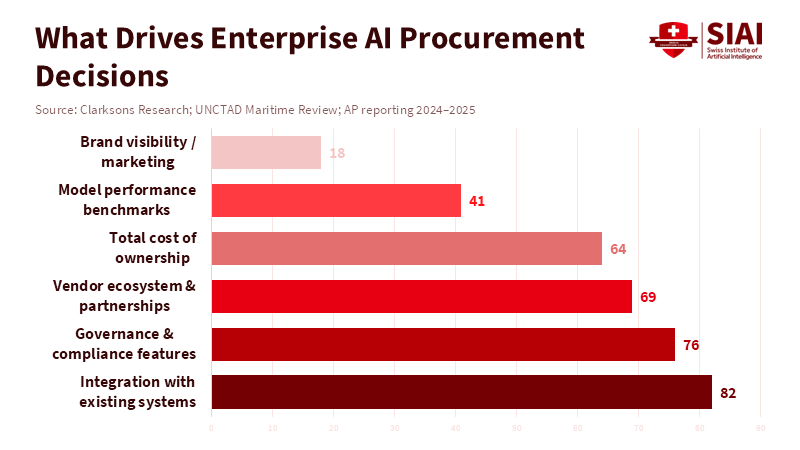

Where the Real Buying Power Lies: Enterprise AI Management

The discussions that matter most to tech chiefs and purchasing teams are very functional. What they care about includes connectors, audit trails, the risk of being locked into a single vendor, how quickly the system responds to key operations, and how much responsibility a vendor takes for AI errors. Another consideration is which vendor has the right relationships to integrate with systems such as SAP, Salesforce, Workday, and airline reservation platforms. Vendors who can supply solid connectors, role-driven access controls, and clear options for data storage will win deals. This isn't just a theory. Top-tier business platforms are already selling themselves as management systems. They glue AI models into workflows and connect them with a company's data resources. OpenAI's recent introduction of the Frontier platform, designed as a business-focused AI management product for early adopters, signals a shift from model-to-model competition to platform-to-platform competition.

Note that the buying process in large companies is long and complex. For every flashy ad campaign, there is a purchasing spreadsheet, a security review, and a test project. According to Workmate, testing phases vary widely depending on project complexity; simple pilot projects with clean data can progress to production within three to six months, whereas more complex systems may require nine to eighteen months to proceed. Many AI test programs never go into full operation. Studies show a significant difference between demos and systems that are actually ready for use. Gartner, for example, has warned that a large percentage of AI projects could be canceled by 2027 due to costs, unclear business benefits, and insufficient risk controls. This means that major spectacles don't influence decision-makers. They are influenced by ways to reduce risk. This includes vendor service agreements, the ability to observe how the system operates, a record of the model's origins, and a path to supervised production.

Ads Grab Attention but Don’t Drive Enterprise Decisions

Super Bowl ads are great at two things: they raise brand awareness quickly, and they create stories for investors—Anthropic’s ad campaign, which states that Ads are coming to AI. But not to Claude” forces a public debate about how to make money from AI and how to make sure it's trustworthy. It gets headlines and forces company representatives to answer questions on social media. But headlines and social media buzz don't really affect purchasing decisions. Companies don't give out contracts because a vendor ran a clever 30-second ad. They give them out because a platform lowers integration costs, reduces risk, and delivers a consistent return on investment over several months and years. News outlets covered this media battle, and the effect on consumer feelings is immediate. The effect on tech chiefs is small, if it is visible at all.

Think of enterprise agreements as moving along a different path than consumer choices. Sales processes depend on rules, vendor risk evaluations, and partner networks. If a vendor offers an admin layer that handles AI, integrates with business processes, enforces permissions, and creates audit trails, purchasing departments will usually choose that vendor, regardless of how good the Super Bowl ads were. That is why the best way to succeed in the business world is becoming less and less about having the best AI model. Instead, it's more about being the dependable application layer. When vendors refer to these new products as “AI coworkers” or “agent platforms,” they're directly addressing buyers' needs: buyers wanna replace fragile point solutions with components that are governed, interoperable, and live inside the company’s control plane.

The Numbers: Scale, Risk, and the Power of Partnerships

The overall stats are basic but show you a lot. The top research firms have somewhat different predictions, but they all agree on one thing: businesses will spend most of the money. IDC and similar research indicate that companies' AI budgets will reach hundreds of billions this year, and another major research firm predicted that total IT spending on AI will be in the trillions when infrastructure and software are included. To put it differently, the amount of money going into business AI is so large that even small changes in purchasing behavior can create big winners and losers. This money doesn't go to the company with the best ad; it goes to vendors that can fit into purchasing processes and spread the cost across many business units.

The risks and failure rates strengthen the point. Industry studies have found that most pilot programs end before they are effective. IDC and other observers share that most proofs of concept fail not because of model fit but because of data readiness, change management, integration debt, and governance failures. These failure modes are what an admin layer aims to fix. A platform that can reduce integration time, make outputs traceable, and handle administration at scale can turn test projects into ongoing business spending. That's how you turn a purchase win into long-term recurring revenue.

Vendor Strategy: Become the Go-To Admin Layer

For vendors, the plan is simple and calculated: secure positions within business structures where work flows across systems and where people must approve. That means making connectors to ERPs, CRMs, and data warehouses. It is about building governance hooks and role-based controls. Also, you want to offer dashboards that show from which each decision came and how confident you are in it. The reward is not only money. It also controls the data path and the chance pull platform rents via consistent fees and a marketplace. The vendor who becomes the go-to admin earns not only model-use spending but also a share of the business software stack. Proof of this move is everywhere: AI vendors are pitching their products as admin and management platforms and naming business clients as pilots.

This is also where major cloud providers and existing software vendors are important. A well-designed admin layer has to fit on top of cloud infrastructure and inside corporate identity systems. Cloud providers will compete by offering infrastructure and managed admin services. Also, large software companies will try to add agent features into their suites. Because of this, business buyers will prefer systems that fit with current purchasing flows and partner networks, not platforms that act as separate consumer services. The meaning for startups is blunt: the consolidation strategy playbook should be your product roadmap, not an afterthought for the marketing team.

Advice for Educators, Administrators, and Decision-makers

For educators and program leaders, the lesson is useful: The lesson teaches integration and governance skills, not just model tuning. A new group of AI managers needs to know how to build solid data pipelines, audit model outputs, and draft service agreements that unite vendors and buyers. Classes should emphasize the people-and-process side of AI use — things like change management, contract design, and rules — because these are the things that turn pilots into production. For administrators, the job is to change vendor selection criteria to reward observability, testability, and composability over marketing hype.

Policymakers should make sure purchases are predictable and auditable. If regulators require audit records and source data for high-risk decisions, vendors that already provide those capabilities will have a structural advantage. A policy that clarifies liability for automated decisions will make business buyers more willing to sign multi-year contracts with admin vendors that agree to clear responsibilities. That is the lever that moves spending from tests to recurring contracts. The policy opportunity is not in a regulation; it is in purchasing standards and liability frameworks.

Addressing the Obvious Concerns

One concern is that advertising still matters: brand trust has pull at boardrooms and stock markets. That’s right. A known brand reduces sales friction and helps attract talent. But brand doesn't replace contracts with audit rights, nor does it change the technical burden of connecting with a bank’s core ledger. Another worry is that models still matter: better models make better results. That’s also right. The extra value of a somewhat better model shrinks if the vendor can't run it within a company's control plane. The winner has to unite belief in effectiveness with long-lasting integration and governance.

Another concern is that hyperscalers will own the admin layer. They have size and relationships, but hyperscalers don't automatically gain trust in every business field. Things like banking, healthcare, and government all place rules that reward specialized admin and compliance features. That creates opportunities for vendors who unite field depth with platform interoperability. These aren't simply theoretical openings. They are visible in early partnerships and the naming of first-mover clients on recently announced platforms.

The Super Bowl ad dispute is a helpful story. It shows values and frames public debate, but it isn't how business budgets are chosen. Purchase teams vote with contracts, not with clicks. They give platform dominance to products that lower integration cost, handle risk, and supply observability. The destiny of enterprise AI will be chosen in engineering roadmaps, partner ecosystems, and governance contracts. Vendors who understand this will stop using marketing as a replacement for product engineering. They will build admin layers that become default paths for work. That’s the market that determines winners, not the halftime show. If policymakers, educators, and administrators want to shape good outcomes, they should act on the levers that matter to procurement: clearer standards, better training, and rules that reward auditable, composable, and secure orchestration. The risks are high. The money is moving. The quiet field is now the main one.

The views expressed in this article are those of the author(s) and do not necessarily reflect the official position of the Swiss Institute of Artificial Intelligence (SIAI) or its affiliates.

References

AI Business (2026) Enterprises don’t care about Super Bowl ads. AI Business, February.

CNN (2026) Anthropic and OpenAI take their AI rivalry to the Super Bowl. CNN, 6 February.

Davenport, T.H. and Ronanki, R. (2018) ‘Artificial intelligence for the real world’, Harvard Business Review, 96(1), pp. 108–116.

Gartner (2025) Gartner predicts over 40% of agentic AI projects will be canceled by end of 2027. Gartner Press Release, June.

Gartner (2025) Worldwide artificial intelligence spending forecast. Gartner Research.

IDC (2024) IDC FutureScape: Worldwide AI and generative AI spending guide 2025–2028. International Data Corporation.

IDC (2024) Why enterprise AI pilots fail to scale. IDC Analyst Brief.

McKinsey Global Institute (2023) The economic potential of generative AI: The next productivity frontier. McKinsey & Company.

Reuters (2026) Anthropic buys Super Bowl ads in challenge to OpenAI’s monetization strategy. Reuters, February.

The Guardian (2026) AI chatbots: Anthropic and OpenAI go head to head as ads arrive. The Guardian, 7 February.

Similar Post

Narrowing the Flame: AI resource allocation and the practical prevention of wildfires

AI is changing wildfire management by prioritizing where human effort matters most, not by predicting fires. Human-in-the-loop systems cut response time and reduce ignition risk under tight resource limits. This shift turns wildfire control from emergency reaction into practical prevention.

The Impossible Orbit: Why orbital AI satellites are a coordination nightmare

A million satellites would overwhelm orbital coordination long before technical limits are reached Collision risk, debris, and governance failures scale faster than engineering solutions Without strict global control, orbital AI becomes a systemic liability, not progress

Under Glass: How Legal Battles and Platform Politics Rewired the mobile design regime

Mobile design is now governed, not just created This regime shapes how learning technologies function in schools Policy can still redirect design toward education

Back in 2012, Apple won a case where a jury awar

Maximizing Agentic AI Productivity: Why German Firms Must Move Beyond Adoption and Teach Machines to Act

Maximizing Agentic AI Productivity: Why German Firms Must Move Beyond Adoption and Teach Machines to Act

Published

Modified

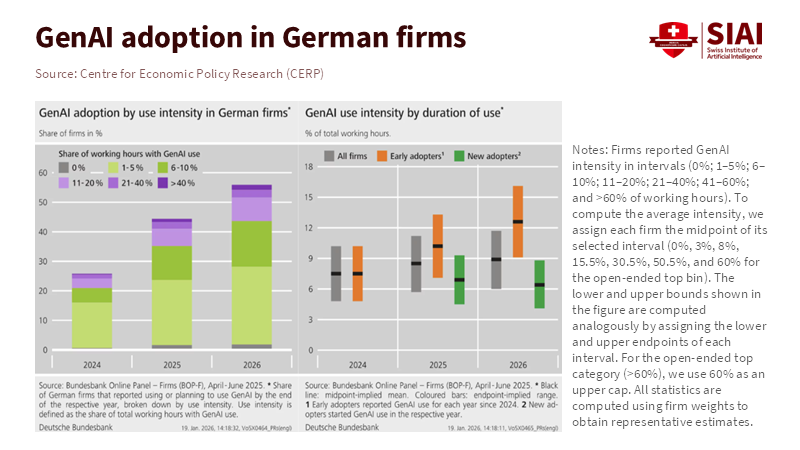

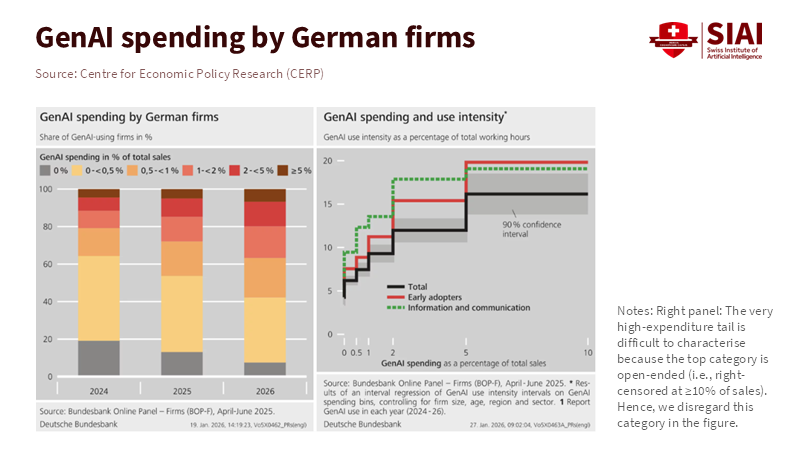

German firms adopted generative AI fast, but productivity gains are flattening The next phase is converting adoption into durable agentic AI productivity Education and policy must shift from tools to systems, governance, and measurement

It didn't take long for generative models to get into businesses. However, the speed at which they were accepted conceals something: the initial positive results aren't as strong as they were. When a company says it uses AI, it could mean anything. It could be a simple chatbot test or a complete system that plans, executes, and learns across various tasks. The important point is that the number of companies that moved past the test stage into routine AI use has increased significantly since 2023. However, the additional output per euro spent is lower than it used to be for those who started using it early on. This means we're not talking about whether we should use AI anymore; we're talking about turning it into something useful. We need to translate general use into something specific and ensure that experiments lead to lasting improvements. If schools, colleges, and politicians see all companies as the same, they won't recognize that companies that have already widely used AI need different kinds of support. They don't need more introductions, but they do need assistance with changing their systems, measuring results, and managing things. In this way, AI can deliver consistent benefits. We call this agentic AI productivity. It's about AI systems that not only create things but also act reliably in complex businesses, increasing output over time.

Agentic AI Productivity and How We Need to Think About It

We need to stop focusing on how many people are using AI and start focusing on the benefits and what businesses are learning. The number of people using generative tools is important because it indicates how many companies have adopted them. However, it doesn't say much about whether companies are getting real value. Evidence shows that many German companies are using it widely, but the performance gains for each additional euro spent are decreasing for companies that already use the tech. This is a sign of saturation: early users get the easy benefits, but getting more requires bigger changes.

This is important for education policy because the skills and support that helped develop rapid tests differ from those needed to scale AI systems. Workers need to know how to write prompts and keep data clean. Also, they need to know how to work with machines, measure AI performance, and ensure explicit guidelines and feedback. Teaching people to use the tools at a basic level won't change their productivity. The big challenge is teaching people to change how they work so that AI becomes a helpful partner that reduces waste, prevents mistakes, and increases what can be done across jobs.

Early tests focused on drafts, summaries, and prototypes. But using AI regularly needs measurements of process value, for example, shorter times across teams, fewer mistakes, and better decisions when things are uncertain. These things are harder to measure, but they're the only way to know if it's still worth spending more money. Companies that focus solely on basic metrics might report early gains that later fade. In short, using AI is now a basic requirement. What will make companies successful is measuring and managing AI productivity.

Proof of Saturation: Germany, Companies, and Returns

From 2023 to 2025, German companies moved quickly from experimenting to using AI regularly. National surveys show a big jump from low adoption in 2023 to much higher numbers the next year. But it's happening at two speeds: many companies report some use, but only a few are using it deeply and regularly.

Growth in the number of companies using AI is important. But independent reviews and company surveys show that the benefits aren't as high for those who started using AI early: spending more money doesn't yield as many gains as it did at first. This suggests that as companies use AI in many areas, the simple gains, like automating text, getting data, or making reports, are used up. Improving later requires changing jobs, combining models with work systems, and changing management.

The impact on policy is clear. If German companies reach a point where simply getting more people to adopt AI isn't enough, then the incentives that drive adoption are outdated. What's important is support for redesigning processes, public resources for measuring AI outcomes, and teaching people to think strategically and manage. These are big changes. They require time, cross-departmental leadership, and new courses in schools and colleges that teach how to set goals, define AI actions, and evaluate results. Without these things, greater use of AI will spread resources too thinly and increase costs without increasing real output.

Italy's Households and Slow Adoption: A Comparison

Compare the saturation in German companies with what's happening with households in Italy. Surveys in Italy show people know about AI systems but are slower to use them regularly, and even slower to use them in daily tasks. Surveys show that about 30% of adults have tried generative tools in the past year, but only a few use them monthly or in ways that change how they work or study.

Households face various challenges, including limited digital skills, restricted access to technology, and concerns about risks and privacy. This makes them careful about using AI. The result is a double contrast: companies adopt AI quickly and then reach a limit, while households adopt it slowly and may never use it properly without support.

For politicians and teachers, the Italian example shows that more access doesn't mean more productive use. For households, learning should start with basic skills and progress to understanding how to work with AI. Schools and adult learning programs need to teach people how to manage AI tools, check results, and make ethical decisions about what to allow AI to do. If not, the social gap will widen: companies that use AI will gain a competitive advantage, while households and small companies that rely on them will fall behind, and inequality will worsen.

From Tests to Systems: What Companies and Schools Must Teach

Turning AI into real gains needs five changes inside companies. Each change affects how teachers train workers. First, move from knowing how to use tools to knowing how to design together. Create workflows where AI and people share goals and feedback. Second, include measurement in how things are done. Focus on process results, not just output numbers. Third, allocate time to management and testing to ensure AI behaves reliably in new situations. Fourth, focus on data and protected links so models can act with accurate information. Fifth, create roles for people who turn strategy into AI instructions. These changes are as much about teaching as about tech. They require courses that combine tech with business design, people skills, and ethics.

Schools and universities need to change quickly. They need to offer courses that teach how to write goals, measure the effects of AI actions, and set limits for AI. Teaching needs to be hands-on: learners should design and run tests that measure gains from small changes. Short programs should teach managers to recognize when increased spending isn't worth it. Also, government policy can help by funding partnerships that enable small businesses to measure results and by supporting benchmarks that let firms compare AI outcomes without disclosing private data.

Addressing Criticisms and Providing Rebuttals

Two common criticisms will come up. First, critics will argue it's too early to discuss saturation because many firms still don't use AI. This is true overall; many small companies are behind. But the policy needs to differ across firms. A general push to increase use misses the firms that need change. Second, some will say that AI is unsafe and we should limit it. That's a valid worry, and it supports our main point. If AI is to operate autonomously, management, testing, and measurement are essential. Stopping AI is not the answer. A better policy supports safe use by funding research, requiring reporting of problems, and promoting test environments in which AI behaviors can be tested before widespread use. Evidence shows that regulators and companies can work together to create standards that allow safe use while protecting people.

Another argument is about how we're measuring things. Critics will say that surveys can't capture long-term results, so claims about benefits are just guesses. That's a fair point. Our view is that the available evidence suggests that benefits decrease after a quick start. We support that claim with different data, along with caution. In cases where direct measurements are missing, policy should concentrate on improving measurement rather than giving large, untargeted support. Companies and governments need to agree on measurements for AI outcomes so we can judge investments.

We are at a turning point. The first push of generative tools changed what companies tried. The next must change how they work. The key is not whether a firm uses AI but whether it can get benefits as investments increase. That's what we mean by AI productivity: systems that act, learn, and improve in ways that raise output for each euro spent. German firms are showing signs of saturation. Italian households show slow adoption. Both facts point to the same conclusion: stop treating use as the goal. Support measurement, management, business design, and teaching about how humans and machines work together. Those are the things that will turn experiments into permanent value. Time is short, and the choice is clear. Support organizations and teach the skills that enable AI to improve work rather than break it up. Doing nothing will give us more tools, more noise, and smaller gains for the same cost.

The views expressed in this article are those of the author(s) and do not necessarily reflect the official position of the Swiss Institute of Artificial Intelligence (SIAI) or its affiliates.

References

Bank for International Settlements (2025) Exploring household adoption and usage of generative AI: New evidence from Italy. BIS Working Papers, No. 1298.

Centre for Economic Policy Research (2025) Generative AI in German firms: diffusion, costs and expected economic effects. VoxEU Column.

Eurostat (2025) Digital economy and society statistics: use of artificial intelligence by households and enterprises. European Commission Statistical Database.

Gambacorta, L., Jappelli, T. and Oliviero, T. (2025) Generative AI, firm behaviour, diffusion costs and expected economic effects. SSRN Working Paper.

Organisation for Economic Co-operation and Development (2024) Artificial Intelligence Review of Germany. OECD Publishing.