Beyond Digital Plantations: Confronting AI Data Colonialism in Global Education

AI data colonialism exploits hidden Global South data workers. Education can resist by demanding fair, learning-centered AI work. Institutions must expose this labor and push for just AI.

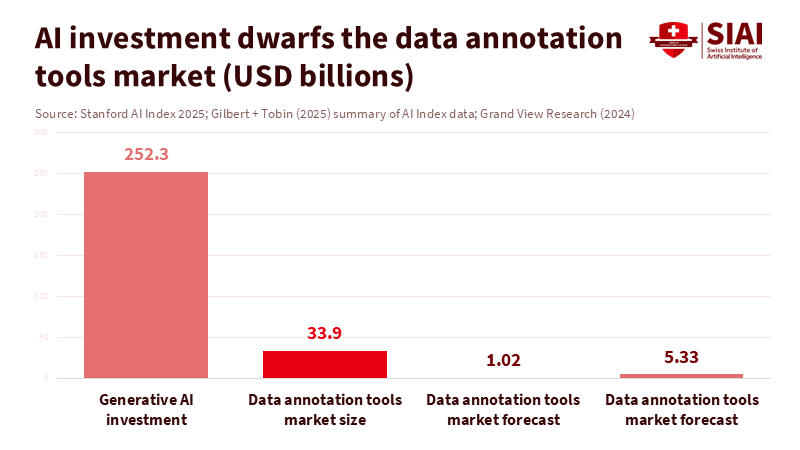

Currently, between 154 million and 435 million people perform the “invisible” tasks that train and manage modern AI systems. They clean up harmful content, label images, and rank model outputs, often earning just a few dollars a day. Meanwhile, private investment in AI reached about $252 billion in 2024, with $33.9 billion directed toward generative AI alone. The global generative AI market is already valued at over $21 billion. One data-labeling company, Scale AI, is worth around $29 billion following a single deal with Meta. This disparity isn’t an unfortunate side effect of innovation; it is the business model. This is AI data colonialism: a digital plantation economy in which workers in the Global South provide inexpensive human intelligence, enabling institutions in the Global North to claim “smart” systems. Education systems are right in the middle of this exchange. The crucial question is whether they will continue to support it or help dismantle it.

AI data colonialism and the new digital plantations

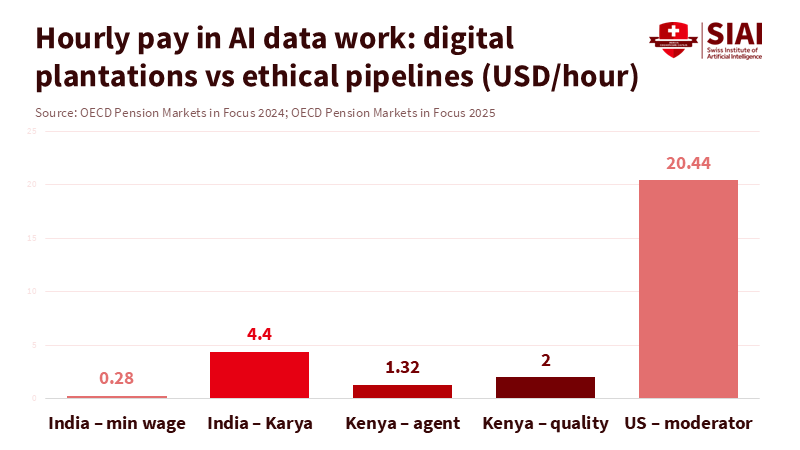

AI data colonialism is more than just a catchphrase. It describes a clear pattern. Profits and decision-making power gather in a few companies and universities that own models, chips, and cloud services. The “raw material” of AI, meaning labeled data and safety work, comes from a scattered workforce of contractors, students, and graduates, many from lower-income countries. Recent studies suggest that this data-labor force is already comparable in size to an entire national labor market, yet most workers earn poverty-level wages. In Kenya and Argentina, data workers who moderate and label content for major AI companies earn about $1.70 to $2 per hour. In contrast, similar jobs in the United States start at roughly $18 per hour. Lower-income countries are effectively exporting human judgment and emotional resilience rather than crops or minerals, and then importing, at a premium, the AI systems built on that labor.

Working conditions in this digital plantation mirror those of the old ones, though they are hidden behind screens. Reports detail workers in Colombia and across Africa handling 700 to 1,000 pieces of violent or sexual content per shift, with only 7 to 12 seconds to decide on each case. Some workers report putting in 18- to 20-hour days, facing intense surveillance and unpaid overtime. A recent study by Equidem and IHRB reveals widespread violations of international labor standards, from low wages to blocked union organizing. A TIME investigation found that outsourced Kenyan workers were earning under $2 per hour to detoxify a major chatbot. New safety protocols introduced in 2024 and 2025 aim to address these issues. However, 81% of content moderators surveyed say their mental health support is still lacking. This situation is not an error in the AI economy; it is how value is extracted. Education systems, from universities to boot camps, are quietly funneling students and graduates directly into this pipeline.

From AI data colonialism to learning-centered pipelines

If we accept the current model, AI data colonialism becomes the default “entry-level job” for a generation of learners in the Global South. Many of these workers are overqualified. Scale AI’s own Outlier subsidiary reports that 87% of its workforce holds college degrees, while 12% have PhDs. These jobs are not casual side gigs; they are survival jobs for graduates unable to find stable work in their fields. Yet their tasks are treated as disposable piecework rather than as part of a structured learning journey into AI engineering, policy, or research. For education, this is a massive waste. It is like training agronomy students and then having them pick cotton for export indefinitely.

There is evidence that a different model can work. In India, Karya provides data services that pay workers around $5 per hour (nearly 20 times the minimum wage) and offers royalties when datasets are resold. Karya reports positive impacts for over 100,000 workers and aims to reach millions more. Projects like this demonstrate that data work can connect to income mobility and skill development instead of relying on exploitative contracts. Simultaneously, the market for data annotation tools is growing rapidly, from about $1 billion in 2023 to a projected $5.3 billion by 2030, while the global generative AI market could increase eightfold by 2034. The funding exists. The challenge is whether educational institutions will ensure that data work linked to their programs is structured as meaningful, learning-focused employment rather than as disposable digital piecework.

Institutions that can break AI data colonialism

Education systems have more power over AI data colonialism than they realize. Universities, vocational colleges, and online education platforms already collaborate with tech companies for internships, research grants, and practical projects. Many inadvertently direct students into annotation, evaluation, and safety tasks framed as valuable experience, often lacking transparency about working conditions. Instead of viewing these roles as a cost-effective way to demonstrate industry relevance, institutions can turn the model on its head. Any student or graduate engaged in data work through an educational program deserves clear rights: a living wage in their local context, limits on exposure to harmful content, mental health support, and a guaranteed path to more skilled roles. This work should count toward formal credit and recognized qualifications, not just as “extra practice.”

Some institutions in the Global South are already heading in this direction through AI equity labs, community data trusts, and cooperative platforms. They design projects where local workers create datasets in their languages and based on their priorities—such as agricultural support, local climate risks, or indigenous knowledge—while gaining technical and governance skills. A cooperative approach, as advocated by proponents of platform cooperativism in Africa, would allow workers to share in the surplus generated by the data they produce. For education providers, this means shifting the concept of “industry partnership” away from outsourcing student moderation and toward building locally owned shared AI infrastructure. That change transforms the dynamic: instead of training students to serve distant platforms, institutions can empower them to become stewards of their own data economies.

Educating against AI data colonialism, not around it

Policymakers often claim that data-intensive jobs are short-term, since automation will soon eliminate the need for human labelers. However, current investment trends indicate otherwise. Corporate AI spending hit $252.3 billion in 2024, with private investment in generative AI reaching $33.9 billion—more than eight times the levels of 2022. The generative AI market, worth $21.3 billion in 2024, is expected to grow to around $177 billion by 2034. The demand for high-quality, culturally relevant data is rising alongside it. Annotation tools and services are also on a similar growth trajectory. Even if some tasks become automated, human labor will remain essential for safety, evaluation, and localization. Assuming that AI data colonialism will disappear on its own simply allows the current model another decade to solidify.
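To make the growth figures above easier to compare, the short sketch below converts the quoted start and end values into an implied compound annual growth rate. It uses only the numbers cited in this article; the helper name `cagr` and the constant names are illustrative assumptions, and the result is a back-of-the-envelope check rather than a forecast.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate implied by two endpoint values."""
    if start_value <= 0 or end_value <= 0 or years <= 0:
        raise ValueError("values and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1


# Figures quoted in the article, in USD billions: (start, end, years elapsed).
GEN_AI_MARKET = (21.3, 177.0, 2034 - 2024)    # generative AI market, 2024 to 2034
ANNOTATION_TOOLS = (1.0, 5.3, 2030 - 2023)    # data annotation tools, 2023 to 2030

gen_ai_rate = cagr(*GEN_AI_MARKET)
annotation_rate = cagr(*ANNOTATION_TOOLS)

if __name__ == "__main__":
    print(f"Generative AI market: {gen_ai_rate:.1%} per year")
    print(f"Annotation tools:     {annotation_rate:.1%} per year")
```

Under these inputs, both markets imply sustained growth of roughly a quarter per year, which is consistent with the argument that demand for human data work is unlikely to fade within the decade.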

Regulators are beginning to respond, but education policy has not kept up. New frameworks, such as the EU Platform Work Directive, create a presumption of employment for many platform workers and require algorithmic transparency. A global trade union alliance for content moderators has established international safety protocols, advocating for living wages, capped exposure to graphic content, and strong mental health protections. Lawsuits from Kenya to Ghana demonstrate that courts recognize psychological injury as genuine workplace harm. Education ministries and accreditation bodies can build on this momentum by requiring any AI vendor used in classrooms or campuses to disclose how its training data was labeled, the conditions under which it was done, and whether protections for workers meet emerging global standards. Public funding and partnerships should then be linked to clear labor criteria, similar to requirements many already impose for environmental or data privacy compliance.

For educators and administrators, tackling AI data colonialism also means incorporating the labor that supports AI into the curriculum. Students studying computer science, education, business, and social sciences should learn how data supply chains operate, who handles the labeling, and what conditions those workers face. Case studies can showcase both abusive practices and fairer models, like Karya’s earn–learn–grow pathways. Teacher training programs should address how to discuss AI tools with students honestly—not as magical solutions, but as systems built on hidden human labor. This transparency prepares graduates to design, procure, and regulate AI with labor justice in focus. It also empowers students in the Global South to see themselves not just as workers in the digital realm, but as future builders of alternative infrastructures.

The plantation metaphor is uncomfortable, but it effectively highlights the scale of imbalance. On one side, hundreds of millions of workers, often young and well-educated, perform repetitive, psychologically demanding tasks for just a few dollars an hour and without real career advancement. On the other side, a small group of firms and elite institutions accumulate multibillion-dollar valuations and control the direction of AI. This represents the essence of AI data colonialism. Education systems can either normalize it by quietly channeling students into digital piecework and celebrating any AI partnership as progress, or they can challenge it. This requires implementing strict labor standards, investing in cooperative and locally governed data projects, and teaching students to view themselves as rights-bearing professionals rather than anonymous annotators. If we fail, the history of AI will resemble a new chapter of familiar exploitation. If we succeed, education can transform today's digital plantations into tomorrow's laboratories for a fairer, decolonized AI economy.

The views expressed in this article are those of the author(s) and do not necessarily reflect the official position of the Swiss Institute of Artificial Intelligence (SIAI) or its affiliates.

References

Abedin, E. (2025). Content moderation is a new factory floor of exploitation – labour protections must catch up. Institute for Human Rights and Business.

Du, M., & Okolo, C. T. (2025). Reimagining the future of data and AI labor in the Global South. Brookings Institution.

Grand View Research. (2025). Data annotation tools market size, share & trends report, 2024–2030.

Okolo, C. T., & Tano, M. (2024). Moving toward truly responsible AI development in the global AI market. Brookings Institution.

Reset.org (L. O’Sullivan). (2025). “Magic” AI is exploiting data labour in the Global South – but resistance is happening.

Startup Booted. (2025). Scale AI valuation explained: From startup to $29B giant.

Stanford HAI. (2025). 2025 AI Index report: Economy chapter.

TIME. (2023). Exclusive: OpenAI used Kenyan workers on less than $2 per hour to make ChatGPT less toxic.

UNI Global Union / TIME. (2025). Exclusive: New global safety standards aim to protect AI’s most traumatized workers.

Karya. (2023–2024). Karya impact overview and worker compensation.


AI Industrial Policy Is National Security

Treat compute and clean power as strategic infrastructure for national security. Crowd in private capital with compute purchase agreements, capacity credits, and loan guarantees. Tie support to open access, safety standards, and allied coordination as China accelerates.

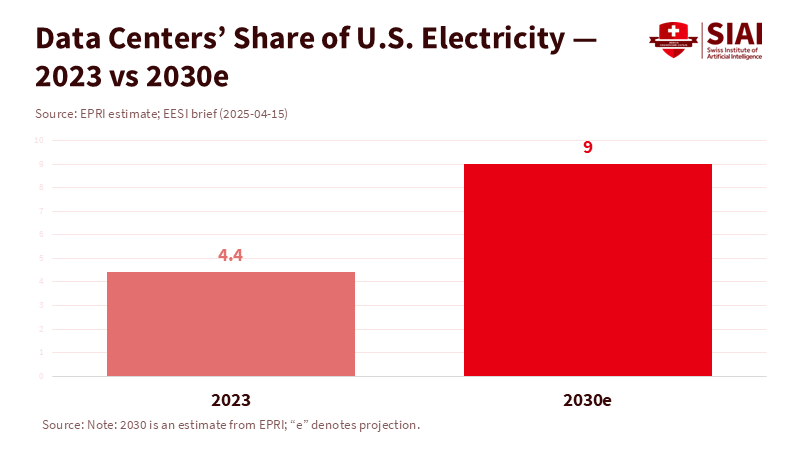

The number to pay attention to is nine. By 2030, U.S. data centers might use up to 9% of all U.S. electricity, mainly due to AI workloads. This estimate from the Electric Power Research Institute reshapes the current discussion. It’s not just about private companies securing enough funding for chips and clusters anymore. This challenge affects energy, manufacturing, and national defense. An AI industrial policy that overlooks power supply, production capacity, and fair access will not succeed. Meanwhile, China’s AI stack is advancing rapidly, from local accelerators to chatbots reaching a global audience. Although markets are thriving now, skeptical headlines could deter investment tomorrow. If we view computing as a private luxury, we invite instability. If we see it as strategic infrastructure, we can build capacity, maintain predictable prices, and set standards that benefit the public and the technological advantage of free nations.

AI industrial policy as a national security initiative

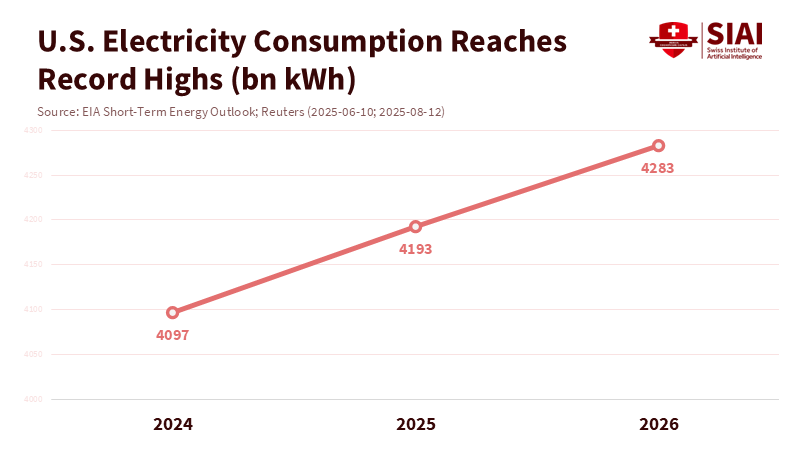

The United States must view advanced computing and its power supply as strategic resources essential for national security. The analogy is not glamorous, but it is relevant: in the 19th century, railroads determined great-power contests; in the 21st century, computing and energy will do the same. Recent policies hint at this change. Washington imposed stricter export controls on high-end AI chips and tools in 2024 and 2025 to limit military transfers and safeguard its lead in training-class semiconductors. At the same time, federal energy forecasts predict record-high electricity demand in 2025 and 2026, with AI-heavy data centers as a significant driver. These signs indicate national security is at stake. The competition is not only about model performance; it’s about supply chains, price stability, and access during crises. In this environment, AI industrial policy becomes the backbone that coordinates interests across computing, energy, and financial markets.

The next step is to ensure that security and openness work together through large-scale planning. Public funding should come with public responsibilities: fair access to critical computing resources, reasonable prices, and resilience. This idea is already reflected in the National AI Research Resource pilot, which provides researchers with computing power and data that they would not otherwise have access to. The same idea underpins the industrial grants meant to restore advanced chip production capacity. However, the current approach lacks the necessary scale and scope. A few grants and a research pilot will not be sufficient as workloads and energy requirements increase. AI industrial policy needs to function at the level of a comprehensive industrial expansion. This requires planning for gigawatts of stable, clean power, petaflops to exaflops of easily accessible computing, and financing that can withstand market fluctuations.

China’s rapid progress makes delay a costly option. Huawei is shipping new Ascend accelerators that domestic buyers consider credible alternatives. At the same time, DeepSeek has demonstrated how an affordable, rapidly evolving model can disrupt markets and perceptions. Western officials have raised alarms about national security threats posed by model use and the risk of chips slipping through control measures. The goal is not to incite panic but to strive for parity. Without a careful, security-focused AI industrial policy, the U.S. risks losing ground as competitors quickly integrate models across government and industry.

AI industrial policy must fund power and computing together

Policy cannot separate computing from the power that supports it. The International Energy Agency projects that global electricity demand from data centers could more than double, reaching around 945 TWh by 2030, with AI being the key factor. In the U.S., federal analysis indicates that total power consumption will hit new highs in 2025 and 2026, with data centers as a major contributor. If computing power expands but energy supply does not, prices will rise, installations will slow, and projects will become concentrated in a few locations with weak transmission capabilities. This presents a national risk. A serious AI industrial policy must connect three key elements: grid capacity, long-term procurement of clean power, and the placement of computing resources close to reliable transmission lines.

A financing model is already visible. The Loan Programs Office has used federal credit to restart large-scale, emission-free energy generation, such as the Palisades nuclear plant, through an infrastructure reinvestment program. That same approach can support grid-improving investments, nuclear restarts, upgrades, and long-gestation clean energy projects that can help AI facilities. By combining this with standardized, government-backed power purchase agreements for “AI-capable” megawatts, developers can secure lower-cost financing. On the computing side, we should increase open-access resources through a Strategic Compute Reserve that buys training-class time the same way the government purchases satellite bandwidth. This reserved capacity would be allocated to defense-critical, safety-critical, and open-research needs through clear, competitive rules. The U.S. does not need to own the clusters. Still, it requires reliable, reasonably priced access to them, especially in emergencies.

The numbers support this approach. McKinsey estimates that AI-ready data centers will require around $5.2 trillion in global investment by 2030, with over 40% of that likely to occur in the U.S. The government cannot and should not cover that entire amount. However, it can encourage private investment and address coordination challenges that markets struggle with, like long transmission timelines, uneven connection queues, and volatile power prices. Some federal guarantees, standardized contracts, and risk-sharing strategies will lower borrowing costs, stabilize construction cycles, and keep capacity operational when credit tightens.

AI industrial policy that encourages private investment—even amidst bubble fears

Today, the markets are enthusiastic. Hyperscalers plan to invest tens of billions annually, and chip manufacturers are facing multi-year backlogs. Yet, the forward trend is not guaranteed. Prominent warnings about an “AI bubble” highlight a simple reality: while the long-term narrative may hold, short-term funding cycles can create issues. A few setbacks or economic shocks could slow equity issuance and prompt lenders to withdraw. AI industrial policy must account for this potential. The solution isn’t to issue blank checks; it is to create smart, conditional, counter-cyclical safeguards that prevent key projects from stalling at critical moments.

Three strategies could help. The first is compute purchase agreements: long-term federal contracts for training hours and inference capacity with qualified suppliers that meet strict security, privacy, and openness standards. These agreements would secure revenue, reduce financing costs, and prevent favoritism by focusing on delivered capacity and compliance instead of company identities. The second is capacity credits for reliable, clean energy serving certified data centers, modeled after clean-energy tax credits but linked to reliability and location value. The third is loss-sharing loan guarantees for infrastructure near the grid (such as substations, transmission lines, and high-voltage connections), which private companies under-invest in because the benefits are spread across many users.

We can see how public financing can stimulate private investment without complete nationalization. When Washington provided Intel with a mix of grants and loans through the CHIPS and Science Act, it didn’t acquire equity. It reduced the risks associated with U.S. manufacturing. Following the earnings reports, the company showcased expedited U.S. funding alongside private contributions from global partners. That’s the model: public investment for public benefit, executed by the private sector under enforceable terms. If equity markets fluctuate, the policy cushion keeps progress steady. If they rise, federal exposure remains minimal. In every scenario, AI industrial policy maintains strategic momentum.

Concerns about market excesses must be weighed against real demand. The IEA forecasts that AI-optimized data center energy consumption could quadruple by 2030. U.S. electricity usage is already nearing record highs. Microsoft alone anticipates around $80 billion in AI-driven data center investments for fiscal 2025, with other companies indicating similar trends. Bubble discussions will come and go, but the underlying forces—shifting workflows towards AI assistants, code generation, scientific simulations, and AI-intensive services—are persistent. Policy should lean into this reality by facilitating financing and linking support to openness and security.

AI industrial policy for open access, collaborative deterrence, and standards

Security goes beyond just keeping adversaries away from our technology. It also involves ensuring that crucial computing resources are accessible to innovators, universities, and small businesses that drive the ecosystem. The NAIRR pilot has validated the concept at a research level; now we should expand this idea into a long-term, well-supported facility. An enhanced NAIRR could serve as the public access point to the Strategic Compute Reserve, with strict eligibility criteria, audit processes, and privacy guidelines. Access would come with responsibilities: publishing safety findings, supporting red-team evaluations, and contributing to guidelines for responsible use.

International policy should follow that approach. The U.S. has tightened export restrictions on training-class accelerators and tools, while also rallying allies to close loopholes. Simultaneously, China’s rapid development highlights how quickly cost-effective models can proliferate and how difficult it is to regulate their downstream applications. Concerns have been raised about censorship, data management, and possible military associations. The best response isn’t isolation; it’s forming a trusted-compute network among allies that establishes shared procurement standards, security benchmarks, and auditing requirements for technology and models. If standards are met, access to allied markets, interconnections, and public contracts is granted. If not, it is denied. This strategy turns openness into an asset and positions standards as a form of deterrence.

Finally, openness must encompass markets, not just research labs. Public funding should ensure fair access to essential infrastructure. Contracts for publicly funded technology and its energy should contain favorable access conditions, set aside capacity for research and startups, and include clear rules against self-preferencing. History shows us that when public funds create private infrastructure, the public must receive more than just ceremonial gestures. It must have access that fosters competition.

Return to the number nine. If data centers are on track to consume as much as 9% of U.S. electricity by 2030, computing is already national infrastructure, not a niche sector. We cannot support this infrastructure based on short-term trends or leave it at the mercy of energy constraints and inconsistent standards. A clear AI industrial policy offers a balanced solution. It supports robust, clean power and accessible computing together. It encourages private investment through purchase agreements, credits, and guarantees that might be modest in budget size but significant in impact. It links support to openness, security, and cooperation among allies. Finally, it prepares for funding shifts without interrupting strategic growth. China’s swift advances will not wait. Markets will not always be favorable. The grid will not self-correct. The United States needs to act now, before shortages define the conditions, to ensure computing is reliable, affordable, and accessible. Because in this competition, capacity shapes policy, and policy ensures security.

References

Associated Press. (2024, May 27). Elon Musk’s xAI says it has raised $6 billion to develop artificial intelligence.

Biden White House. (2024, March 20). Fact sheet: President Biden announces up to $8.5 billion preliminary agreement with Intel under the CHIPS & Science Act.

Wheeler, T. (2025, November 18). OpenAI floats federal support for AI infrastructure—what should the public expect? Brookings Institution.

Energy.gov (U.S. Department of Energy, Grid Deployment Office). (n.d.). Clean energy resources to meet data center electricity demand.

Energy.gov (U.S. Department of Energy, Loan Programs Office). (n.d.). Holtec Palisades.

Holtec International. (2024, September 30). Holtec closes $1.52B DOE loan to restart Palisades.

International Energy Agency. (2025, April 10). AI is set to drive surging electricity demand from data centres…

International Energy Agency. (2024). Energy & AI: Energy demand from AI.

Investing.com. (2025, October 23). Earnings call transcript: Intel Q3 2025 beats expectations.

Le Monde. (2025, February 24). Artificial intelligence: China’s ‘DeepSeek moment’.

McKinsey & Company. (2025, April 28). The cost of computing: A $7 trillion race to scale data centers.

McKinsey & Company. (2025, October 7). The data center dividend.

National Science Foundation. (2024). National AI Research Resource (NAIRR) pilot overview.

National Science Foundation / White House OSTP. (2024, May 6). Readout: OSTP-NSF event on opportunities at the AI research frontier.

Reuters. (2024, March 29). U.S. updates export curbs on AI chips and tools to China.

Reuters. (2025, January 13). U.S. tightens its grip on global AI chip flows.

Reuters. (2025, April 22). Huawei readies new AI chip for mass shipment as China seeks Nvidia alternatives.

Reuters. (2025, June 10). Data center demand to push U.S. power use to record highs in 2025, ’26, EIA says.

Reuters. (2025, September 9). U.S. power use is expected to reach record highs in 2025 and 2026, EIA says.

Reuters. (2025, November 18). No firm is immune if the AI bubble bursts, Google CEO tells BBC.

TIME. (2024, January 26). The U.S. just took a crucial step toward democratizing AI access (NAIRR pilot).

U.S. Department of Energy (press release). (2025, September 16). DOE approves sixth loan disbursement to restart Palisades nuclear plant.

Utility Dive. (2024, March 27). DOE makes $1.5B conditional loan commitment to help Holtec restart Palisades.

Yahoo Finance. (2025, October 24). Intel’s Q3 earnings surge following U.S. government investment.