
The AI Labor Divide: Who Wins, Who Survives, and Who Falls Away

AI is creating a sharp labor divide between capital owners, stable workers, and those being pushed out. Education policy must adapt to this new AI labor divide or risk permanent inequality. Public finance and schooling must evolve together to prevent economic exclusion.

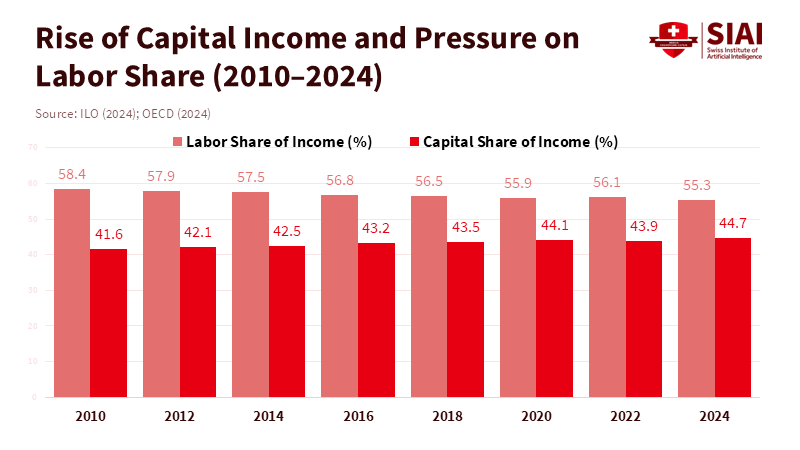

Artificial intelligence is not just changing tasks; it is changing who earns a living, and at scale. The clearest early pattern, and the one that should steer policy now, is an emerging AI labor divide: a thin stratum of owners and integrators captures most of the gains, a broad middle manages to keep pace, and a shrinking tail loses both jobs and wage share. That pattern already shows up as capital income rising while labor’s share stalls or falls. This is not a distant scenario. Recent cross-country and policy analyses show the same tilt: corporate profits and returns to capital are growing faster than wages, automation is reallocating work inside firms rather than creating broad new demand for labor, and public-finance models now treat taxing AI-driven capital as a core fiscal question. If teachers and lawmakers treat AI as a productivity puzzle rather than a redistribution problem, we will miss the moment to reshape schools, tax systems, and safety nets in ways that preserve social and civic functioning.

Understanding the AI Job Divide: The Rich, the Okay, and the Poor

The first thing to understand is that the economy is sorting people into three groups that are likely to persist. At the top are the have-lots: the people who own the leading AI technology, the big internet platforms, and the investment funds that finance automation. They earn more because AI substitutes for workers, which makes it easier to grow their businesses. Below them are the haves: workers and small businesses whose work is still valuable enough to keep their pay steady, or whose services AI improves, which helps them stay in business. At the bottom are the have-nots: workers whose jobs are easy to automate or who work in areas where demand is shrinking. Their earnings keep falling.

This three-part picture matters because it clarifies where intervention is needed. Retraining alone is not enough; we also need to change the tax system, create jobs, and build support systems that keep gains from flowing only to those who already hold capital. Evidence that this is happening is starting to accumulate. A report from Axios notes that although organizations invested between $30 billion and $40 billion in generative AI, 95% saw no return on their investments, suggesting that AI-generated wealth is concentrated in a small number of successful companies. Financial analyses have documented a parallel pattern: corporate profits growing while workers' incomes decline over the same period.

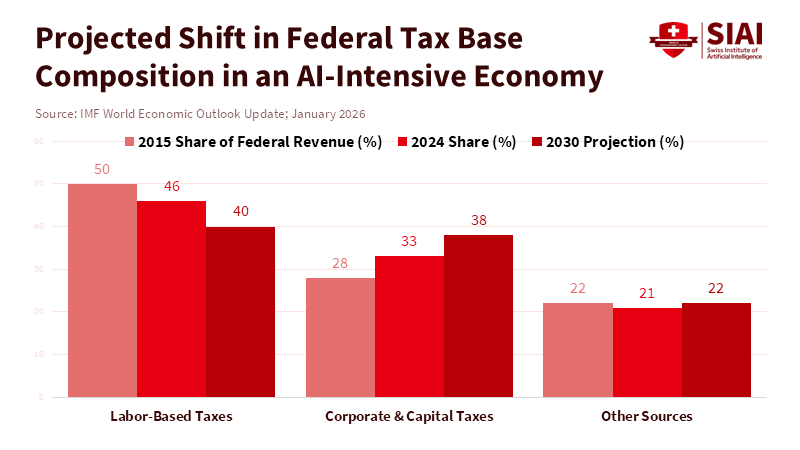

On the policy side, new studies examine how public finance might work in an economy transformed by AI. They consider treating AI systems and computing power as new tax bases and propose ways to redirect part of the income flowing to the owners of AI. These are not merely opinions; they respond to real changes in how income is distributed and how companies generate revenue.

Many data series indicate that the share of national income going to workers has been under pressure for years, and AI is now intensifying that pressure. International organizations report that workers' incomes have not recovered to pre-pandemic levels, while corporate profits keep rising even when economic growth is modest. The exact numbers vary by country and measurement, but the direction is clear: AI is amplifying a trend that began in the late 20th century. Schools need to understand this because the nature and extent of job losses will determine what they must teach and what kinds of support programs will be required.
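The mechanism described above, profits growing faster than wages, erodes labor's share of income even when wages themselves are rising. The following is a minimal sketch under deliberately simplified, hypothetical assumptions: national income is treated as wages plus profits only, and the starting levels and growth rates (2% a year for wages, 6% for profits) are illustrative, not figures from the cited sources.

```python
def labor_share_path(wages, profits, wage_growth, profit_growth, years):
    """Return the labor share W / (W + P) for each year 0..years.

    Hypothetical two-factor split: national income is treated as wages plus
    profits only, ignoring taxes, depreciation, and mixed income.
    """
    shares = []
    for t in range(years + 1):
        w = wages * (1 + wage_growth) ** t      # wage bill compounds at wage_growth
        p = profits * (1 + profit_growth) ** t  # profits compound at profit_growth
        shares.append(w / (w + p))
    return shares


# Illustrative inputs only: wages 60 and profits 40 (a 60% labor share),
# wages growing 2% a year and profits 6% a year.
path = labor_share_path(60.0, 40.0, 0.02, 0.06, years=10)
for year in (0, 5, 10):
    print(f"year {year:2d}: labor share = {path[year]:.1%}")
```

With these illustrative inputs the labor share falls from 60% to about 50.5% over ten years, even though wages grow every year. The point is the direction of the effect, not the specific numbers.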

What This Means for Schools, Credentials, and What We Teach

If this divide created by AI worsens, schools will face two challenges. First, they need to prevent people who are pushed into the have-nots category from being permanently stuck there. Second, they need to change how we issue credentials and create career paths so the haves can keep making money without making it too hard for others to earn the credentials they need. This means we need to stop thinking only about future skills and start thinking about a mix of skills, ways to redistribute money, and changes to our institutions.

Skills are still important. Skills such as solving complex problems, understanding digital technology, and adapting to different situations will help many workers remain useful alongside AI. But skills alone aren't going to solve the main money and distribution problem. If most of the money from increased productivity goes to the people who own investments, then the economy can grow while most people's standard of living stays the same.

What this means for what we teach is that we should include four things. First, we need to connect technical training to the real world of economics. We need to teach people how AI makes money and who gets that money so that they understand how internet platforms and business models work, not just how to write code. Second, we need to make credentials that are modular and stackable the norm. This means creating shorter, validated paths aligned with employers' needs, reducing the time and cost of re-entering the job market. Third, we need to invest in teaching methods that combine human decision-making, oversight, and ethics. These are areas where humans are still valuable in supervising and correcting what AI does. Fourth, we need to expand career transition programs that are connected to community colleges and regional groups. These programs should connect displaced workers with growing industries in their local area rather than focusing solely on the national job market.

There's also a fairness issue. Not all regions have the same capacity to help displaced workers find new jobs, and the regions where the have-nots are concentrated often have the fewest new opportunities. This means the federal government and charitable organizations need to provide more support for regional retraining centers and make it easier to transfer credentials between states. It also means education funding must be flexible, through measures such as tax credits for training, income-based microloans for short programs, and public investment in programs that target the people most likely to fall into the have-not category. These are specific steps that can reduce the social cost of the AI job divide while still encouraging innovation.

Rethinking Taxes, Government Finances, and Institutions to Support Education

Education cannot be separated from the fiscal arrangements that pay for it. If AI shifts income from wages toward capital, then payroll taxes and income taxes on labor will become weaker sources of revenue. That puts at risk the funding for public schools, higher-education subsidies, and the training programs people need to move into new work. That is why recent public-finance frameworks consider measures such as taxing computing power or the revenue generated by AI systems to capture part of the new income.

There are specific policy ideas that align with education goals and fiscal realities. One is to create a dedicated AI dividend or investment fund that uses a small portion of AI profits to support a national training fund for community colleges and apprenticeship programs. Another is to change employer incentives so that companies that benefit from automation contribute to local retraining efforts through levies, pooled wage funds, or tax credits tied to successful retraining outcomes. A third is to view public investments in lifelong learning as infrastructure, like roads or broadband. Learning systems need steady, predictable funding that can survive economic ups and downs.

Some people will say that these steps may discourage innovation or create heavy burdens for companies. Those are reasonable concerns. But policy can be designed to be fair and neutral. A small levy on computing power or a tax on profits from AI systems can be adjusted to protect research and development while still generating money for retraining and social safety nets. It's important for education leaders to ensure that any new revenue streams are used specifically for training, credential portability, and transition services, rather than being mixed into general spending where they can be easily diverted.

Responding to Concerns: Faith in Productivity, Faith in Retraining, and Political Practicality

Some people are optimistic and will say that AI will create new jobs and that technology has always led to net gains for workers over time. That's possible, but it's important to look at the details. Past technologies often reallocated labor across industries and locations. AI replaces cognitive tasks and concentrates money through digital platforms and intellectual property. Recent studies suggest that the immediate impact of AI has been to reallocate tasks within companies and to create gains for capital owners rather than to create many new jobs across the economy. So, it's risky to rely on historical comparisons without making structural policy changes.

Another argument is that retraining can address most displacement. Retraining is necessary but not enough. It helps people who can get access to quality programs and who are in areas where there's demand for new skills. But for many workers who are geographically isolated, older, or have caregiving responsibilities, retraining without income support and job placement services is not a complete solution. This is where coordinated policy is important. We need to combine training with wage protection, portable benefits, and information on employers' needs so that retraining leads to real jobs, not just inflated credentials.

Finally, political practicality is a real barrier. People with a lot of money have incentives to slow down or shape reforms that would reduce their gains. That's why education leaders and administrators need to be proactive. Schools and colleges can form alliances with labor groups, regional businesses, and civic organizations to create durable local systems that deliver retraining at scale. These alliances change the political situation. When local economies see clear paths from public training to business hiring, the argument for shared investment becomes stronger. The alternative is a collection of large but ineffective national plans that never reach the workers who are most at risk.

Base Education Policy on Reality, Not Just Optimism

We began with a simple idea: the AI job divide is forming now, splitting the economy into those who have a lot, those who have enough, and those who don't have enough. That classification matters because it shows where policy should focus. It's not only about teaching people new skills, but also about remaking the institutions that fund learning, support transitions, and capture some of the money AI creates for public goods. For educators, this means changing curricula to focus on economic context, modular credentials, and human-monitoring skills. For decision-makers, it means modernizing tax systems and creating durable local retraining systems. For administrators, this means forming partnerships across sectors and ensuring that training programs deliver measurable hiring outcomes.

If we don't act, we're likely to end up with a stable economy with a growing GDP but weakened social foundations. There will be fewer taxpayers funding schools, more families relying on weak private retraining markets, and sharp regional divides where opportunities are concentrated around AI hubs. The practical approach is to treat education and government finance as two parts of the same response. Let's redirect a portion of AI profits toward lifelong learning, make credentials portable across areas, and design fair, modest taxes on AI that fund training and transition support. These steps are feasible, politically defensible, and morally urgent. They won't stop disruption, but they can make sure that disruption doesn't turn into permanent exclusion.

The views expressed in this article are those of the author(s) and do not necessarily reflect the official position of the Swiss Institute of Artificial Intelligence (SIAI) or its affiliates.

References

Axios (2026) AI rush creates rarified class of “Have-Lots”. 12 January.

Brookings Institution (2026a) Future tax policy: A public finance framework for the age of AI. Washington, DC: Brookings Institution.

Brookings Institution (2026b) Public finance in the age of artificial intelligence: A primer. Washington, DC: Brookings Institution.

Economy.ac (2026) Corporate profit concentration and labor share decline in the AI era. Economy.ac Research Brief, February.

International Labour Organization (2024) World Employment and Social Outlook: Trends 2024. Geneva: ILO.

Organisation for Economic Co-operation and Development (2024) The impact of artificial intelligence on productivity, distribution and growth. Paris: OECD Publishing.


The Cost of Hurry: Why the speed of AI adoption is a policy choice with winners, losers—and no shortcuts

AI speed is a policy choice, not a universal race. Rushing adoption can deepen inequality and strain education systems. Measured AI adoption builds lasting capacity and stability.

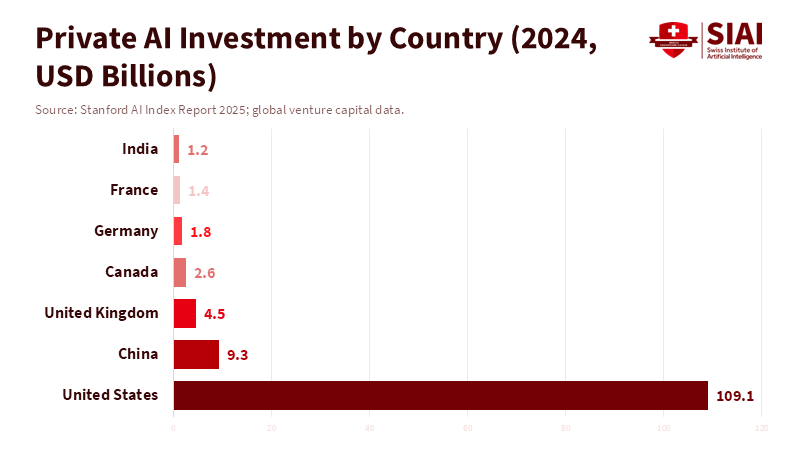

In 2024, private investment in AI in the United States far surpassed that of any other country: approximately $109.1 billion in new private funding, about 12 times the $9.3 billion invested in China that year. This has concentrated talent, computing power, and market power in a small group of leading companies. The imbalance matters because technological leadership rarely rests on a single brilliant idea. It depends on a robust system that includes capital, abundant power, talented people, and the capacity to absorb expensive failures. With ample resources in one place, you can move fast; without them, trying to move fast can be a disaster. So every education official, college leader, and labor-market planner needs to ask not whether AI will change things, but how quickly our systems can adapt to those changes. Moving fast without the right resources means sacrificing future capacity to look good today, and it leaves teachers, experienced workers, and entire economies struggling to catch up from behind.

Why it's important to think about how fast we adopt AI

People often talk about adopting AI as if it were all-or-nothing: either you jump in, or you get left behind. But that's not the whole story. The speed of adoption can magnify existing advantages. If you already have a lot of money, strong research centers, and plenty of cloud computing, you can not only use AI models but also shape the standards, data processes, and even the kind of talent that's considered normal. The first movers end up setting the rules. What's taught in schools, the qualifications people need, and even job descriptions start to reflect the tools and methods of those leading companies, instead of what most schools or firms can actually manage. Schools that must serve everyone come under pressure to copy those tools in the classroom, which can mean buying cloud services, installing expensive equipment, and hiring a few specialists, while most teachers don't get the support they need. Updating education then becomes a prestige project for a select few instead of a real improvement for everyone.

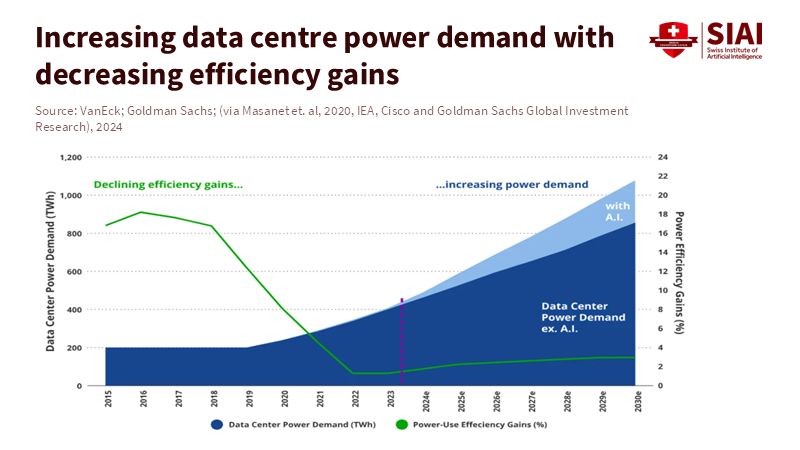

There's another reason speed matters: money. Going all-in on AI requires investment in data centers, skilled trainers, software licenses, and a reliable power supply. If governments or universities chase short-term gains instead of building a solid base, they can end up with financial problems later. Public budgets are already tight, and rapid AI adoption can add costs that are hard to see at first, while the benefits, such as higher productivity, new courses, and improved learning, usually take time to appear. If a government borrows money to build a flagship AI center but cannot find the people to run it or the power to keep it going, that center becomes a waste of money. This is already happening in places where private AI investment is high but public finances are stretched. Moving fast is not the same as adopting AI in a way that is affordable and includes everyone.

A world of different speeds for AI adoption

The world is dividing into three groups with respect to AI. The first consists of the superpowers racing ahead, where companies and countries compete intensely to lead in AI platforms and are willing to spend heavily to win, even at considerable risk. For them, speed widens an existing lead. Their education systems will likely prioritize cutting-edge research and retraining programs for a select few. This could increase inequality within those countries, but they can absorb the risks and costs of leading. They see their investments as part of a larger strategy, not one-off experiments.

The second group is small and middle-income countries, for which trying to keep up with the leaders can be a serious struggle. They face three problems. First, they lack the private capital and the cloud and chip infrastructure that would bring costs down. Second, they have skills gaps, especially among older workers, so rapid adoption of automation and AI can displace older workers who then find it hard to move into new careers. Research by Neumark, Burn, and Button finds strong evidence that older women, particularly those approaching retirement age, face age discrimination when looking for jobs. Third, these countries often have limited budgets, so spending on AI infrastructure can crowd out social and educational programs. This leaves them with a hard choice between spending to emulate the AI leaders and investing in foundational systems.

The infrastructure reality makes the pace question unavoidable. Data center electricity demand is accelerating sharply, even as efficiency gains have flattened compared with earlier years. Rapid AI scaling now requires sustained capital, reliable grids, and long-term energy planning, resources that are not evenly distributed across countries.

The third group is a large middle zone, which is everyone else. These countries aren't racing ahead, but they're also not maintaining their basic capabilities. Without a clear plan, they risk slowly falling behind. Public research doesn't receive sufficient funding, companies lack access to high-quality AI models, and the job market loses middle-skill jobs without providing adequate options for people to find new work. These countries become dependent on AI services provided by others and lose control over standards and data use. The message for them is clear: going fast without having the resources to do it right isn't a way to become competitive.

This three-way split is not a judgment about which group is better or worse. It is about understanding the financial and organizational realities each faces. Moving fast makes sense if you control the infrastructure and can afford to take losses. But for everyone else, the right question isn't "How fast can we adopt AI?" but "How can we learn at a pace that allows us to adapt?" Going slow doesn't mean doing nothing. It means taking steps in the right order: first, invest in helping teachers understand technology, then redesign courses to focus on solving problems, and finally provide reliable power and internet access. Then you can start using AI platforms. This approach reduces the risk of wasting money and makes it possible to speed up later in a way that makes sense.

What the speed of AI adoption means for educators, administrators, and policymakers

Educators need to decide what matters most to teach about AI. A good rule is to focus on durable skills: critical thinking, judgment, supervising AI models, and understanding data will remain useful regardless of which tools are available. Courses that teach people how to work with AI, such as how to write prompts, how to evaluate AI output, and how to oversee AI-assisted decisions, are helpful, but they shouldn't replace a solid foundation in other subjects. Buying many narrowly specialized classroom tools can lock schools into particular products, and teachers may find them hard to adopt. Instead, schools should focus on training teachers and creating course materials that introduce AI tools gradually, with ways to measure how well they're working.

Administrators and finance teams need to consider how quickly they plan to adopt AI when setting budgets. When universities or school systems are planning to buy AI tools, they should carefully consider the costs of running them. This includes energy use, license renewals, technical staff, and equipment replacement. Rapidly adopting AI often shifts costs from one-time investments to ongoing expenses, which can be difficult to cut later. A better approach for systems with limited money is to take things slowly. Start with small experiments, measure how well they're working and how they affect the job market, train people, and then expand. International cooperation should focus on building capabilities rather than just donating equipment. Donating equipment without training and a budget to keep it running creates dependence, not self-reliance.

Policymakers need to think about how people will transition to new jobs, especially older workers. Studies show that older workers face challenges finding new jobs as automation and AI replace their roles. Just retraining older workers quickly often doesn't work because of difficulties related to learning, location, and finances. Better approaches include offering options for gradual retirement, retraining people for local jobs, and offering credentials that recognize their prior experience. Social insurance and wage subsidies can provide support as people adjust, and programs that help them find new jobs can increase their chances of employment. These measures cost money, but they're cheaper than the cost of mass unemployment and social problems caused by adopting AI too quickly.

A practical plan: taking things in the right order, being honest about costs, and adopting AI at a measured pace

A plan that treats speed as a tool, not a race, has three main parts. First, take things in the right order. Focus on reliable power, universal internet access, teacher training, and open standards; these make later AI adoption more effective and affordable. Second, be honest about the costs. Consider the total cost of owning AI tools, not just the initial investment, including energy, staff, and replacement cycles. If a government borrows money to build flagship AI centers without a realistic plan for running them, it creates problems for the future. Third, regulate access wisely. Make sure procurement favors interoperable systems, encourage shared computing facilities, and require educational licenses that allow research, reuse, and evaluation. These steps lower the initial cost and increase the opportunities for learning.
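The total-cost-of-ownership point can be made concrete with a small calculation. This is a minimal sketch under stated assumptions: the cost categories (energy, licenses, staff, replacement cycles) come from the text, but the `AIDeployment` class, its field names, the figures, and the choice to ignore discounting are hypothetical illustrations, not a standard costing method or data from the cited sources.

```python
import math
from dataclasses import dataclass


@dataclass
class AIDeployment:
    """Hypothetical cost profile for one AI deployment (currency units are arbitrary)."""
    upfront: float            # one-time purchase or build cost
    energy_per_year: float    # annual electricity bill
    licenses_per_year: float  # annual license renewals
    staff_per_year: float     # annual technical staff
    hardware_refresh: float   # cost of each equipment replacement
    refresh_cycle_years: int  # years equipment lasts before it must be replaced


def total_cost_of_ownership(d: AIDeployment, years: int) -> float:
    """Upfront cost + recurring costs + equipment replacements over `years`.

    Undiscounted for simplicity; a real appraisal would discount future costs.
    """
    recurring = (d.energy_per_year + d.licenses_per_year + d.staff_per_year) * years
    # Equipment bought at year 0 covers one cycle; covering the whole horizon
    # needs ceil(years / cycle) purchases in total, i.e. one fewer replacement.
    replacements = max(0, math.ceil(years / d.refresh_cycle_years) - 1)
    return d.upfront + recurring + replacements * d.hardware_refresh


# Illustrative figures only (e.g. millions), not data from any source.
lab = AIDeployment(
    upfront=1.0,
    energy_per_year=0.15,
    licenses_per_year=0.10,
    staff_per_year=0.25,
    hardware_refresh=0.6,
    refresh_cycle_years=4,
)
tco = total_cost_of_ownership(lab, years=10)
print(f"10-year total cost: {tco:.2f}; upfront share: {lab.upfront / tco:.0%}")
```

With these hypothetical inputs, the upfront purchase is only about 14% of the ten-year total; recurring costs and two hardware replacements make up the rest. That gap is what a budget misses when it counts only the initial investment.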

International partners and organizations can help by shifting their focus from donating equipment to building lasting capabilities. Grants or loans should fund teacher training, regional computing centers, and curriculum development, rather than just individual AI centers at universities. When offering financial support, set measurable goals, such as teacher certification rates, improvements in learning outcomes, and job placement rates, so that success can be measured. This is how to turn good intentions into real benefits for the public.

Finally, the speed of AI adoption must be a democratic decision. Decisions about how quickly to adopt AI are political choices that affect different groups and regions. Open discussions among teachers' unions, universities, industry, and the public can reduce the risk that AI adoption becomes a project for a select few. When educators and communities are involved, the pace is more likely to protect jobs while allowing innovation to continue.

Remember that substantial private investment has already created a situation in which only a few can afford to move quickly with AI. For everyone else, trying to keep up is a risky bet. The alternative isn't to reject AI, but to choose a responsible pace, proceed in the right order, budget honestly for operating costs, and protect workers. Educators, administrators, and policymakers should focus on supporting teachers and on teaching skills that endure. Getting the pace wrong will not only delay the benefits of AI but will also worsen inequality, create stranded assets, and weaken the ability to manage technology. Getting it right means using a measured pace as a plan for inclusion, making decisions based on evidence, and focusing on the good of the public.


References

BBC News (2024) US power grid faces strain from AI-driven electricity demand. BBC News.

Brookings Institution (2024) Why Africa should sequence, not rush into AI. Brookings Institution.

International Monetary Fund (IMF) (2025) Global Debt Monitor 2025. International Monetary Fund.

Organisation for Economic Co-operation and Development (OECD) (2024) Promoting Better Career Choices for Longer Working Lives. OECD Publishing.

Organisation for Economic Co-operation and Development (OECD) (2025) Emerging divides in the transition to artificial intelligence. OECD Publishing.

Reddit (2025) The AI is so fucked you can’t play small nations. EU5 Discussion Forum.

Stanford Institute for Human-Centered AI (HAI) (2025) AI Index Report 2025. Stanford University.

VanEck (2024) Who’s winning the AI rush? VanEck Vectors Insights.
